Step 1: Download Hadoop from the official releases page: https://hadoop.apache.org/releases.html.

Step 2: Extract the downloaded hadoop-3.0.0.tar.gz into the target folder C:\hadoop

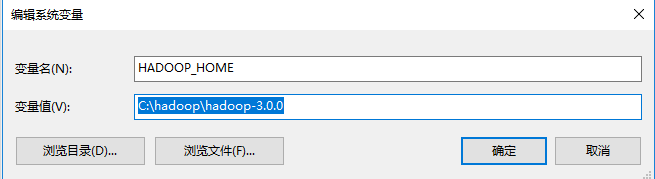

Step 3: Set the environment variables Hadoop needs:

HADOOP_HOME:

PATH:
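The values for these two variables are not shown here. As a rough sketch only, the Python check below assumes a common layout: HADOOP_HOME points at the unpacked Hadoop folder and PATH contains its bin subfolder. The function name `check_hadoop_env` and that layout are assumptions, not something this guide states.

```python
import os
from pathlib import PureWindowsPath


def check_hadoop_env(env):
    """Return a list of problems found in an environment mapping.

    Assumption (not stated in the guide): HADOOP_HOME points at the
    unpacked Hadoop folder and PATH contains its bin subfolder.
    """
    home = env.get("HADOOP_HOME")
    if not home:
        return ["HADOOP_HOME is not set"]

    problems = []
    expected_bin = PureWindowsPath(home) / "bin"
    # Windows separates PATH entries with ';'. PureWindowsPath compares
    # case-insensitively and ignores trailing separators.
    entries = [PureWindowsPath(p) for p in env.get("PATH", "").split(";") if p]
    if expected_bin not in entries:
        problems.append(f"{expected_bin} is not on PATH")
    return problems


if __name__ == "__main__":
    for line in check_hadoop_env(os.environ) or ["environment looks OK"]:
        print(line)
```

Run in a new command prompt after changing the variables, since already-open prompts keep the old environment.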

Step 4: Edit the Hadoop configuration:

1. Edit C:/hadoop/hadoop-3.0.0/etc/hadoop/core-site.xml:

<configuration>
  <property>
    <!-- fs.defaultFS is the current name of this key; fs.default.name is its deprecated alias -->
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
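To double-check what an edited configuration file actually contains, it can be parsed with Python's standard library. This is a minimal sketch; the helper names are illustrative, and the lookup accepts both fs.defaultFS and its older, deprecated spelling fs.default.name.

```python
import xml.etree.ElementTree as ET


def read_hadoop_properties(xml_text):
    """Parse a Hadoop *-site.xml document into a {name: value} dict."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name is not None:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def default_fs(props):
    """Return the default file system URI, or None if it is not configured."""
    # Check the current key first, then the deprecated one.
    return props.get("fs.defaultFS") or props.get("fs.default.name")


CORE_SITE = """
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
"""

if __name__ == "__main__":
    print(default_fs(read_hadoop_properties(CORE_SITE)))
```

For a real check, read the text of core-site.xml from disk and pass it to `read_hadoop_properties` in place of the inline sample.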

2. Edit C:/hadoop/hadoop-3.0.0/etc/hadoop/mapred-site.xml:

<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>

3. Create a data directory under C:/hadoop/hadoop-3.0.0 to hold the HDFS data:

Create a datanode directory under C:/hadoop/hadoop-3.0.0/data;

Create a namenode directory under C:/hadoop/hadoop-3.0.0/data.
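The same folders can be created from a script instead of by hand. A minimal sketch using pathlib follows; the function name `create_data_dirs` is made up for illustration.

```python
from pathlib import Path


def create_data_dirs(hadoop_home):
    """Create data/namenode and data/datanode under hadoop_home."""
    created = []
    for name in ("namenode", "datanode"):
        target = Path(hadoop_home) / "data" / name
        # parents=True also creates the intermediate data directory;
        # exist_ok=True makes the call safe to repeat.
        target.mkdir(parents=True, exist_ok=True)
        created.append(target)
    return created
```

Calling `create_data_dirs(r"C:\hadoop\hadoop-3.0.0")` would produce the two folders described in this step.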

4. Edit C:/hadoop/hadoop-3.0.0/etc/hadoop/hdfs-site.xml:

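<configuration>
  <!-- Set to 1 because this is a single-node Hadoop setup -->
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <!-- current name of the deprecated dfs.permissions key -->
    <name>dfs.permissions.enabled</name>
    <value>false</value>
  </property>
  <!-- The paths below point at the data directory created under C:/hadoop/hadoop-3.0.0 in item 3 -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/C:/hadoop/hadoop-3.0.0/data/namenode</value>
  </property>
  <property>
    <!-- current name of the deprecated fs.checkpoint.dir key -->
    <name>dfs.namenode.checkpoint.dir</name>
    <value>/C:/hadoop/hadoop-3.0.0/data/snn</value>
  </property>
  <property>
    <!-- current name of the deprecated fs.checkpoint.edits.dir key -->
    <name>dfs.namenode.checkpoint.edits.dir</name>
    <value>/C:/hadoop/hadoop-3.0.0/data/snn</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/C:/hadoop/hadoop-3.0.0/data/datanode</value>
  </property>
</configuration>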
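The local directory values in hdfs-site.xml are written with a leading slash, then the drive letter, then forward slashes. A small Python sketch of that conversion from an ordinary Windows path (the helper name is hypothetical):

```python
from pathlib import PureWindowsPath


def to_hadoop_local_path(windows_path):
    """Convert 'C:\\a\\b' into the '/C:/a/b' form used in hdfs-site.xml."""
    path = PureWindowsPath(windows_path)
    if not path.is_absolute():
        raise ValueError(f"expected an absolute Windows path: {windows_path!r}")
    # as_posix() swaps backslashes for forward slashes, giving 'C:/a/b'.
    return "/" + path.as_posix()
```

Passing the namenode folder from item 3, `to_hadoop_local_path(r"C:\hadoop\hadoop-3.0.0\data\namenode")`, gives the string to place in the corresponding `<value>` element.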

5. Edit C:/hadoop/hadoop-3.0.0/etc/hadoop/yarn-site.xml:

<configuration>
  <!-- Site specific YARN configuration properties -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
  <property>
    <!-- the key embeds the service name declared above: aux-services.<service name>.class -->
    <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
    <value>org.apache.hadoop.mapred.ShuffleHandler</value>
  </property>
</configuration>

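6. Edit C:/hadoop/hadoop-3.0.0/etc/hadoop/hadoop-env.cmd: find the line "set JAVA_HOME=%JAVA_HOME%" and replace it with set JAVA_HOME=C:\PROGRA~1\Java\jdk1.8.0_162 (PROGRA~1 is the 8.3 short name of "Program Files", which keeps the path free of spaces).

7. Replace the bin directory: download and extract the archive from https://github.com/steveloughran/winutils

Find the folder that matches your Hadoop version and replace the entire bin directory with it.

Configuration is now complete.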

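Step 5: Start the Hadoop services

Format the NameNode before the first start: C:\Users\zzg>hadoop namenode -format (this form is deprecated in Hadoop 3; hdfs namenode -format is the current equivalent)

Go to the C:\hadoop\hadoop-3.0.0\sbin folder and start the services with start-all.cmd: C:\Users\zzg>start-all.cmd

This starts the following four services at once:

Hadoop NameNode

Hadoop DataNode

YARN Resource Manager

YARN Node Manager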
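One quick way to confirm that a service is actually listening is to try a TCP connection to its port. The sketch below is generic socket code, not a Hadoop API; port 9000 is the NameNode address configured in core-site.xml in Step 4.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    print("NameNode port 9000 open:", is_port_open("localhost", 9000))
```

A result of False right after start-all.cmd may only mean the service is still starting, so it is worth retrying after a few seconds.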

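Step 6: Using Hadoop

1. Open http://127.0.0.1:8088/ to view the status of all nodes in the cluster:

2. Open http://localhost:9870/ to reach the file management page:

Open the file management page:

Create a directory:

Upload a file:

The upload succeeds.

Note: earlier versions served the file management page on port 50070; in 3.0.0 it moved to port 9870. The change is documented in the official guide: http://hadoop.apache.org/docs/r3.0.0/hadoop-project-dist/hadoop-hdfs/HdfsUserGuide.html#Web_Interface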

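3. Work with files from the hadoop command line:

Create a directory with mkdir: hadoop fs -mkdir hdfs://localhost:9000/user

The new user directory now exists.

Upload a file with put: hadoop fs -put C:\Users\songhaifeng\Desktop\11.txt hdfs://localhost:9000/user/

The file is now uploaded.

List the files in a directory with ls: hadoop fs -ls hdfs://localhost:9000/user/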
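The same commands can be driven from a script. The following is a minimal sketch that only wraps the `hadoop fs` command line shown above; the function names are made up for illustration, and it assumes the hadoop launcher is reachable through PATH.

```python
import shutil
import subprocess

HDFS_ROOT = "hdfs://localhost:9000"  # address configured in core-site.xml


def hadoop_fs_args(action, *operands):
    """Build the argument list for `hadoop fs -<action> <operands...>`."""
    return ["hadoop", "fs", f"-{action}", *operands]


def run_hadoop_fs(action, *operands):
    """Run the command and return its standard output as text."""
    # shutil.which honours PATHEXT on Windows, so it resolves hadoop.cmd.
    launcher = shutil.which("hadoop")
    if launcher is None:
        raise FileNotFoundError("hadoop launcher not found on PATH")
    args = hadoop_fs_args(action, *operands)
    args[0] = launcher
    result = subprocess.run(args, capture_output=True, text=True, check=True)
    return result.stdout
```

For example, `run_hadoop_fs("ls", HDFS_ROOT + "/user/")` runs the ls command from this step and returns the listing as a string; `check=True` raises an exception if the command exits with an error.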