使用Java编写第一个MapReduce程序

- 演示目标

- 演示环境

- 搭建MR工程

- 配置pom.xml

- 编写WordCountMapper.java

- 编写WordCountReducer.java

- 编写启动类Startup.java

- 打包工程

- 部署MR工程

-

演示目标

编写一个MapReduce,用于计算文章中所有词语的出现次数(WordCount)。

演示环境

- 基于Hadoop2.6.5;

- 完整环境请参考以下两篇博客:

- 从0开始搭建Hadoop2.x高可用集群(HDFS篇)

- 从0开始搭建Hadoop2.x高可用集群(YARN篇)

- 上传MR计算所用的文章到HDFS中;

搭建MR工程

使用 IDEA新建一个Maven工程

配置pom.xml

<properties>

<hadoop.version>2.6.5</hadoop.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>${hadoop.version}</version>

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

</exclusion>

<exclusion>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>servlet-api</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>${hadoop.version}</version>

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

</exclusion>

<exclusion>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>servlet-api</artifactId>

</exclusion>

</exclusions>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>${hadoop.version}</version>

<exclusions>

<exclusion>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

</exclusion>

<exclusion>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

</exclusion>

<exclusion>

<groupId>javax.servlet</groupId>

<artifactId>servlet-api</artifactId>

</exclusion>

</exclusions>

</dependency>

</dependencies>

编写WordCountMapper.java

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.util.StringUtils;

import java.io.IOException;

public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

String[] strArray = StringUtils.split(value.toString(), ' ');

for (String str : strArray) {

context.write(new Text(str), new IntWritable(1));

}

}

}

编写WordCountReducer.java

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

@Override

protected void reduce(Text key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {

int count = 0;

for (IntWritable i : values) {

count += i.get();

}

context.write(key, new IntWritable(count));

}

}

编写启动类Startup.java

import nick.hadoop.mapper.WordCountMapper;

import nick.hadoop.reducer.WordCountReducer;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.FileSystem;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class Startup {

public static void main(String[] args) {

Configuration configuration = new Configuration();

try {

FileSystem fileSystem = FileSystem.get(configuration);

Job job = Job.getInstance(configuration);

job.setJarByClass(Startup.class);

job.setJobName("WordCount");

job.setMapperClass(WordCountMapper.class);

job.setReducerClass(WordCountReducer.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(IntWritable.class);

FileInputFormat.addInputPath(job, new Path("/input"));

Path outputPath = new Path("/output");

if (fileSystem.exists(outputPath))

fileSystem.delete(outputPath, true);

FileOutputFormat.setOutputPath(job, outputPath);

if (job.waitForCompletion(true))

System.out.printf("Job执行成功");

} catch (Exception e) {

e.printStackTrace();

}

}

}

打包工程

- 在IDEA中打开

Project Structure(快捷键:Ctrl+Shift+Alt+S); - 依次选择下图中红框部分:

- 选择入口类(Startup.java),并将META-INF的目录修改为src目录;

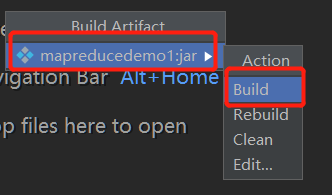

- 依次选择下图中红框部分,选择完毕后,IDEA便开始打包工程:

- 打包完毕后,可以看到打包好的jar包:

部署MR工程

使用编写好的MR工程jar包完成WordCount任务

上传jar包到服务器

将MR工程上传到Hadoop集群中的服务器:

运行jar包

使用hadoop jar xxxx.jar运行:

本文内容由网友自发贡献,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系:hwhale#tublm.com(使用前将#替换为@)