I. Background

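Kubernetes (k8s) is a container orchestration system in which master nodes centrally manage the worker nodes, so in production the master plays a pivotal role in keeping the cluster highly available. If the only master node goes down, the cluster's control plane becomes unavailable, which is why a multi-master setup matters so much in production.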

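Before configuring a multi-master cluster, make sure you understand how to build a single-master k8s cluster, because the multi-master setup is just an extension of it. For the single-master walkthrough, see: https://blog.csdn.net/wangqiubo2010/article/details/101203625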

II. Multi-master cluster layout

Node plan for the master cluster

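| hostName | IP | Spec | Notes |
| --- | --- | --- | --- |
| k8svip | 192.168.1.40 | 4 GB RAM, 2 cores / 2 CPUs, 40 GB disk | Virtual IP (VIP) used by keepalived; not a real VM. It gives master01, master02, and master03 a single entry point. |
| master01 | 192.168.1.32 | 4 GB RAM, 2 cores / 2 CPUs, 40 GB disk | VM running the master01 node, keepalived, and haproxy |
| master02 | 192.168.1.34 | 4 GB RAM, 2 cores / 2 CPUs, 40 GB disk | VM running the master02 node, keepalived, and haproxy |
| master03 | 192.168.1.35 | 4 GB RAM, 2 cores / 2 CPUs, 40 GB disk | VM running the master03 node, keepalived, and haproxy |
| worknode1 | 192.168.1.33 | | VM running the worknode1 node |
| keepalived | 192.168.1.32, 192.168.1.34, 192.168.1.35 | | Health-checks the master nodes and moves the VIP between them (failover) |
| haproxy | 192.168.1.32, 192.168.1.34, 192.168.1.35 | | Load-balances API server traffic across the master nodes |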

III. Configure hosts and passwordless SSH

1. Edit the hosts file (/etc/hosts). Run on master01, master02, master03, and worknode1.

192.168.1.40 k8svip

192.168.1.32 master01

192.168.1.34 master02

192.168.1.35 master03

192.168.1.33 worknode1

# Work around failures resolving raw.githubusercontent.com

199.232.4.133 raw.githubusercontent.com

199.232.68.133 raw.githubusercontent.com
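Because the same entries go onto four machines, it can help to script the edit so that re-running it does not duplicate lines. The following is only a sketch: the function names add_host_entry and add_cluster_hosts are made up for illustration, and the IP/name pairs are the cluster entries listed above.

```shell
#!/bin/bash
# add_host_entry FILE IP NAME (hypothetical helper)
# Appends "IP NAME" to FILE unless a line with that IP and name already
# exists, so the script can be re-run safely.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  touch "$file"
  if ! awk -v ip="$ip" -v name="$name" \
       '$1 == ip && $2 == name { found = 1 } END { exit !found }' "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# add_cluster_hosts FILE (hypothetical helper)
# Adds the five cluster entries from the hosts list above.
add_cluster_hosts() {
  local file="$1"
  add_host_entry "$file" 192.168.1.40 k8svip
  add_host_entry "$file" 192.168.1.32 master01
  add_host_entry "$file" 192.168.1.34 master02
  add_host_entry "$file" 192.168.1.35 master03
  add_host_entry "$file" 192.168.1.33 worknode1
}

# Usage on each node, as root:
#   add_cluster_hosts /etc/hosts
```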

2. Set up passwordless SSH. Run the following on master01.

# Set up passwordless login

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa &> /dev/null

ssh-copy-id root@master01

ssh-copy-id root@master02

ssh-copy-id root@master03

ssh-copy-id root@worknode1

IV. Prerequisites for the multi-master deployment

Note: run every step below on all nodes: master01, master02, master03, worknode1 ...

1. k8s cluster prerequisites. For reference: https://blog.csdn.net/wangqiubo2010/article/details/101203625

(1) Disable the firewall, SELinux, and swap on every node. Shell file closeCompose.sh:

#!/bin/bash

# Disable the firewall (run on every node)

systemctl stop firewalld && systemctl disable firewalld

# Disable SELinux (run on every node)

sed -i 's/enforcing/disabled/' /etc/selinux/config && setenforce 0

# Disable swap (run on every node)

swapoff -a && sed -ri 's/.*swap.*/#&/' /etc/fstab

# Sync the clock (run on every node)

yum install ntpdate -y && ntpdate time.windows.com
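kubelet refuses to start while swap is active, so it is worth confirming that closeCompose.sh really took effect before moving on. The checks below are a minimal sketch; swap_is_off and fstab_swap_disabled are hypothetical helper names, and the file arguments exist only so the functions can be tried against sample files.

```shell
#!/bin/bash
# swap_is_off [FILE] (hypothetical helper)
# Succeeds when FILE (default /proc/swaps) lists no active swap area.
# /proc/swaps always starts with a header line, so any further non-empty
# line is an active swap device.
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}"
  [ "$(tail -n +2 "$swaps_file" | grep -c .)" -eq 0 ]
}

# fstab_swap_disabled [FILE] (hypothetical helper)
# Succeeds when FILE (default /etc/fstab) has no uncommented swap entry,
# meaning swap stays off after a reboot.
fstab_swap_disabled() {
  local fstab="${1:-/etc/fstab}"
  if grep -Eq '^[^#]*[[:space:]]swap[[:space:]]' "$fstab"; then
    return 1
  fi
  return 0
}

# Usage on each node:
#   swap_is_off && fstab_swap_disabled && echo "swap is off now and after reboot"
```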

(2) Configure the yum repositories. Shell file yumRepos.sh:

#!/bin/bash

# File name: yumRepos.sh

rm -rf /etc/yum.repos.d/*

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

sed -i '/aliyuncs/d' /etc/yum.repos.d/CentOS-Base.repo

yum makecache fast

yum install -y vim wget net-tools lrzsz

cd /etc/yum.repos.d

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

(3) Configure the Kubernetes yum repository. Shell file yumKubernetes.sh:

#!/bin/bash

# yumKubernetes.sh: configure the Kubernetes yum mirror

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

(4) Install kubeadm, kubelet, and kubectl. This deployment uses version 1.16.15-0. Shell file initKube.sh:

#!/bin/bash

#initKube.sh

# To install a specific Kubernetes version, pin it in the package names as below; this installs 1.16.15

yum install -y kubelet-1.16.15-0 kubeadm-1.16.15-0 kubectl-1.16.15-0

# Enable and start kubelet

systemctl enable kubelet && systemctl start kubelet

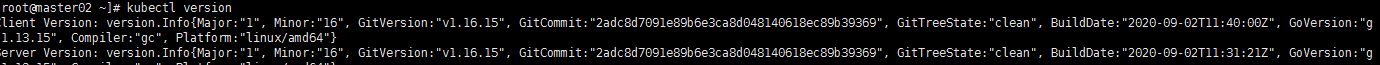

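(5) Check that kubelet and kubectl were installed successfully.

kubectl version

If it prints the client version information, the installation succeeded.

2. Install docker. Shell file dockerInit.sh.

--- Install docker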

#!/bin/bash

#dockerInit.sh

yum -y install docker-ce-18.06.3.ce-3.el7

systemctl start docker

systemctl enable docker

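--- Configure docker registry mirrors.

vim /etc/docker/daemon.json

Add the following to daemon.json. A JSON object should define registry-mirrors only once, so all mirrors go into a single array:

{

"registry-mirrors": ["https://m9r2r2uj.mirror.aliyuncs.com", "http://hub-mirror.c.163.com", "https://docker.mirrors.ustc.edu.cn"],

"insecure-registries": ["172.16.8.112:5000", "172.16.8.139:5000"]

}

insecure-registries lists the addresses of private image registries; for example, 172.16.8.112:5000 is my own private registry. Deploying and configuring a registry will be covered in a separate chapter later.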

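--- Restart docker and enable it at boot. Shell file startDocker.sh.

#!/bin/bash

#startDocker.sh

# Restart docker so the daemon.json changes take effect (dockerInit.sh already started it)

systemctl restart docker

# Start docker at boot

systemctl enable docker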

3. Set bridge-nf-call-iptables to 1. Run this on every node in the cluster; otherwise initialization fails.

echo "1" >/proc/sys/net/bridge/bridge-nf-call-iptables
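A value written to /proc/sys with echo is lost at the next reboot. One common way to make it persistent is a file under /etc/sysctl.d, sketched below. The helper name write_k8s_sysctl and the file name k8s.conf are assumptions chosen for illustration, and the ip6tables line is an extra setting that usually accompanies the iptables one rather than something the steps above require.

```shell
#!/bin/bash
# write_k8s_sysctl [FILE] (hypothetical helper)
# Writes the bridge netfilter settings to FILE (default
# /etc/sysctl.d/k8s.conf) so they are re-applied at every boot.
write_k8s_sysctl() {
  local conf="${1:-/etc/sysctl.d/k8s.conf}"
  cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
}

# Usage on each node, as root:
#   modprobe br_netfilter   # the /proc/sys/net/bridge entries need this module
#   write_k8s_sysctl
#   sysctl --system         # reload all sysctl configuration files
```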

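4. List the components Kubernetes needs and their versions, then pull the matching images.

--- List the required components and their versions

kubeadm config images list

--- The output lists the components and versions required by Kubernetes 1.16.15.

--- Pull the component images. Shell file k8sCompose.sh: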

#!/bin/bash

#k8sCompose.sh

k8sversion=v1.16.15

etcdversion=3.3.15-0

dnsversion=1.6.2

# k8s

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:$k8sversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:$k8sversion k8s.gcr.io/kube-apiserver:$k8sversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:$k8sversion

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:$k8sversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:$k8sversion k8s.gcr.io/kube-controller-manager:$k8sversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:$k8sversion

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:$k8sversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:$k8sversion k8s.gcr.io/kube-scheduler:$k8sversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:$k8sversion

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:$k8sversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:$k8sversion k8s.gcr.io/kube-proxy:$k8sversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:$k8sversion

# etcd

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$etcdversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$etcdversion k8s.gcr.io/etcd:$etcdversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:$etcdversion

# coredns

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$dnsversion

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$dnsversion k8s.gcr.io/coredns:$dnsversion

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:$dnsversion

# pause

docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1

docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1 k8s.gcr.io/pause:3.1

docker rmi registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.1
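The pull, tag, and rmi triplet repeats for every image, so the same work can be expressed as a loop over an image list. This is a sketch of that idea rather than a replacement for the script above: the function names and the DOCKER override (useful for a dry run with echo) are assumptions, while the registry, image names, and versions are copied from k8sCompose.sh.

```shell
#!/bin/bash
# Mirror registry and target prefix, both taken from k8sCompose.sh.
MIRROR=registry.cn-hangzhou.aliyuncs.com/google_containers
TARGET=k8s.gcr.io

# DOCKER can be overridden (for example DOCKER=echo) for a dry run.
DOCKER="${DOCKER:-docker}"

# k8s_images (hypothetical helper): print the name:tag list used above.
k8s_images() {
  local k8sversion=v1.16.15 etcdversion=3.3.15-0 dnsversion=1.6.2
  local component
  for component in kube-apiserver kube-controller-manager kube-scheduler kube-proxy; do
    echo "${component}:${k8sversion}"
  done
  echo "etcd:${etcdversion}"
  echo "coredns:${dnsversion}"
  echo "pause:3.1"
}

# pull_k8s_images (hypothetical helper): pull each image from the mirror,
# retag it under k8s.gcr.io, then remove the mirror tag.
pull_k8s_images() {
  local image
  for image in $(k8s_images); do
    $DOCKER pull "${MIRROR}/${image}" || return 1
    $DOCKER tag "${MIRROR}/${image}" "${TARGET}/${image}"
    $DOCKER rmi "${MIRROR}/${image}"
  done
}

# Usage on each node:
#   pull_k8s_images
```

Setting DOCKER=echo before calling pull_k8s_images prints the docker commands instead of running them, which is a cheap way to review the image list first.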

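V. Building the Keepalived + HAProxy cluster

1. This setup involves three files.

- keepalived: keepalived.conf and check_apiserver.sh. keepalived.conf is the keepalived configuration file; check_apiserver.sh is the health-check script, which keepalived.conf references.

- haproxy: haproxy.cfg, the haproxy configuration file.

2. keepalived.conf walkthrough.

3. check_apiserver.sh walkthrough.

4. haproxy.cfg walkthrough.

5. The contents of keepalived.conf, check_apiserver.sh, and haproxy.cfg are as follows.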

#!/bin/bash

#check_apiserver.sh

APISERVER_VIP=192.168.1.40

APISERVER_DEST_PORT=6443

errorExit() {

echo "*** $*" 1>&2

exit 1

}

curl --silent --max-time 2 --insecure https://localhost:${APISERVER_DEST_PORT}/ -o /dev/null || errorExit "Error GET https://localhost:${APISERVER_DEST_PORT}/"

if ip addr | grep -q ${APISERVER_VIP}; then

curl --silent --max-time 2 --insecure https://${APISERVER_VIP}:${APISERVER_DEST_PORT}/ -o /dev/null || errorExit "Error GET https://${APISERVER_VIP}:${APISERVER_DEST_PORT}/"

fi

# Remember to make this file executable:

#chmod +x /etc/keepalived/check_apiserver.sh

# Parameter to adjust:

# APISERVER_VIP: the virtual IP

#keepalived.conf

! /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id LVS_DEVEL

}

vrrp_script check_apiserver {

script "/etc/keepalived/check_apiserver.sh"

interval 3

weight -2

fall 10

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface ens32

virtual_router_id 51

priority 200

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.1.40/24

}

track_script {

check_apiserver

}

}

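# Parameters to adjust as needed:

# state: MASTER or BACKUP

# interface: name of the primary network interface

# virtual_router_id: virtual router ID (must be the same on all three masters)

# priority: election priority

# virtual_ipaddress: the virtual IP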

# /etc/haproxy/haproxy.cfg

#---------------------------------------------------------------------

# Global settings

#---------------------------------------------------------------------

global

log /dev/log local0

log /dev/log local1 notice

daemon

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 1

timeout http-request 10s

timeout queue 20s

timeout connect 5s

timeout client 20s

timeout server 20s

timeout http-keep-alive 10s

timeout check 10s

#---------------------------------------------------------------------

# apiserver frontend which proxys to the masters

#---------------------------------------------------------------------

frontend apiserver

bind *:8443

mode tcp

option tcplog

default_backend apiserver

#---------------------------------------------------------------------

# round robin balancing for apiserver

#---------------------------------------------------------------------

backend apiserver

option httpchk GET /healthz

http-check expect status 200

mode tcp

option ssl-hello-chk

balance roundrobin

server master01 192.168.1.32:6443 check

server master02 192.168.1.34:6443 check

server master03 192.168.1.35:6443 check

# [...]

# Adjust hostname and ip:port as needed

6. On master01, create these files with the contents above. The copy script below expects them in /home/myself/etcd.

7. Install keepalived and haproxy, and back up their default configuration files. Run on master01, master02, and master03.

#!/bin/bash

#installKeepHaproxy.sh

yum install -y haproxy keepalived

# --now enables the service and starts it in one step

systemctl enable keepalived --now

systemctl enable haproxy --now

# Back up the default configuration files

mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

mv /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak

# Create the directory that holds keepalived.conf, check_apiserver.sh, and haproxy.cfg

mkdir -p /home/myself/etcd

8. Copy keepalived.conf, check_apiserver.sh, and haproxy.cfg into place, including on master02 and master03. Shell file keepHaproxyCopy.sh. Run on master01.

#!/bin/bash

#keepHaproxyCopy.sh

##https://github.com/kubernetes/kubeadm/blob/master/docs/ha-considerations.md#options-for-software-load-balancing

# Copy the files into place on the local node (master01)

cp /home/myself/etcd/haproxy.cfg /etc/haproxy/haproxy.cfg

cp /home/myself/etcd/check_apiserver.sh /etc/keepalived/check_apiserver.sh

cp /home/myself/etcd/keepalived.conf /etc/keepalived/keepalived.conf

# Copy the files to master02

scp /home/myself/etcd/haproxy.cfg master02:/etc/haproxy/haproxy.cfg

scp /home/myself/etcd/check_apiserver.sh master02:/etc/keepalived/check_apiserver.sh

scp /home/myself/etcd/keepalived.conf master02:/etc/keepalived/keepalived.conf

# Copy the files to master03

scp /home/myself/etcd/haproxy.cfg master03:/etc/haproxy/haproxy.cfg

scp /home/myself/etcd/check_apiserver.sh master03:/etc/keepalived/check_apiserver.sh

scp /home/myself/etcd/keepalived.conf master03:/etc/keepalived/keepalived.conf

9. Edit keepalived.conf on master01, master02, and master03 individually.

- keepalived.conf on master01. Run on master01.

vim /etc/keepalived/keepalived.conf

- keepalived.conf on master02. Run on master02.

vim /etc/keepalived/keepalived.conf

- keepalived.conf on master03. Run on master03. Its configuration stays consistent with master02's.
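The per-node edits come down to the state and priority lines, so they can also be applied with sed instead of by hand. The sketch below assumes the non-primary nodes use keepalived's BACKUP state and takes the priorities 200, 190, and 180 from the test-case table later in this article; set_keepalived_role is a hypothetical helper name, and the screenshots of the actual per-node files are not reproduced here.

```shell
#!/bin/bash
# set_keepalived_role FILE STATE PRIORITY (hypothetical helper)
# Rewrites the "state" and "priority" lines of a keepalived.conf,
# keeping their indentation and leaving every other line untouched.
set_keepalived_role() {
  local conf="$1" state="$2" priority="$3"
  sed -E \
    -e "s/^([[:space:]]*)state[[:space:]]+.*/\1state ${state}/" \
    -e "s/^([[:space:]]*)priority[[:space:]]+.*/\1priority ${priority}/" \
    "$conf" > "${conf}.tmp" && mv "${conf}.tmp" "$conf"
}

# Usage, one call per node (values are assumptions, see the text above):
#   master01: set_keepalived_role /etc/keepalived/keepalived.conf MASTER 200
#   master02: set_keepalived_role /etc/keepalived/keepalived.conf BACKUP 190
#   master03: set_keepalived_role /etc/keepalived/keepalived.conf BACKUP 180
```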

10. Restart keepalived and haproxy. Run on master01, master02, and master03.

systemctl restart keepalived && systemctl restart haproxy

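11. Test the virtual IP configured in keepalived. The VIP must be reachable, because it carries master failover and load balancing. Run on master01, master02, and master03.

ping 192.168.1.40

Note: if the ping fails, run ip addr and check that the Ethernet interface name matches the interface setting in keepalived.conf.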
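To see which node currently holds the VIP, and on which interface, the output of ip addr can be filtered instead of read by eye. This is a small sketch; holds_vip and vip_interface are hypothetical helpers that read the one-line-per-address format printed by ip -o -4 addr show.

```shell
#!/bin/bash
# holds_vip VIP (hypothetical helper)
# Reads `ip -o -4 addr show` output on stdin and succeeds when VIP is
# assigned to one of the listed interfaces. Field 4 is "address/prefix".
holds_vip() {
  awk -v vip="$1" \
    '{ split($4, a, "/"); if (a[1] == vip) found = 1 } END { exit !found }'
}

# vip_interface VIP (hypothetical helper)
# Same input; prints the name of the interface that carries VIP, if any.
vip_interface() {
  awk -v vip="$1" '{ split($4, a, "/"); if (a[1] == vip) print $2 }'
}

# Usage on a master node:
#   ip -o -4 addr show | holds_vip 192.168.1.40 && echo "this node holds the VIP"
#   ip -o -4 addr show | vip_interface 192.168.1.40
```

The interface name printed by vip_interface is the one that should appear in the interface setting of keepalived.conf.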

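VI. Deploying the k8s cluster

Everything above is preparation for the highly available k8s cluster, and it is also the most time-consuming part of the whole build. The rest of this article covers initializing the cluster and installing its plugins.

1. Run the following command on master01 to initialize k8s.

# --control-plane-endpoint k8svip:8443 sets the shared endpoint for the multi-master control plane. k8svip is the hosts-file name of the virtual IP managed by keepalived, and 8443 is the port the haproxy frontend binds to in haproxy.cfg

kubeadm init --control-plane-endpoint k8svip:8443 --kubernetes-version=v1.16.15 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16 --upload-certs

The command prints output like the following; follow the steps it shows: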

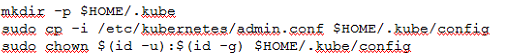

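2. On master02 and master03, run the command indicated in the output above. After the join succeeds on master02 and master03, run the follow-up commands it prints on each of them.

3. On worknode1, run the command shown in the output above.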
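For orientation, the two join commands printed by kubeadm init differ only in the control-plane flags. The sketch below prints their general shape; the token, hash, and certificate key are placeholders that must be replaced with the values from your own kubeadm init output, and the function names are hypothetical.

```shell
#!/bin/bash
# Placeholders only: kubeadm init prints the real values for your cluster.
ENDPOINT="k8svip:8443"
TOKEN="<token>"
CA_HASH="sha256:<hash>"
CERT_KEY="<certificate-key>"

# control_plane_join_cmd (hypothetical helper)
# Shape of the command for master02 and master03: the extra flags make the
# node join as an additional control-plane member.
control_plane_join_cmd() {
  echo "kubeadm join ${ENDPOINT} --token ${TOKEN} --discovery-token-ca-cert-hash ${CA_HASH} --control-plane --certificate-key ${CERT_KEY}"
}

# worker_join_cmd (hypothetical helper)
# Shape of the command for worknode1: same endpoint and token, no
# control-plane flags.
worker_join_cmd() {
  echo "kubeadm join ${ENDPOINT} --token ${TOKEN} --discovery-token-ca-cert-hash ${CA_HASH}"
}

control_plane_join_cmd
worker_join_cmd
```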

VII. Installing the network plugin

The CNI plugin used here is flannel.

# Fetch the flannel manifest

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Deploy it:

kubectl apply -f kube-flannel.yml

Run kubectl get nodes. When all master and worker nodes report Ready, the cluster has been deployed successfully; here master01, master02, master03, and worknode1 all did.
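When scripting the check, it is easier to list the nodes that are not ready than to scan the table by eye. The filter below is a sketch; not_ready_nodes is a hypothetical helper that reads the output of kubectl get nodes --no-headers, where the second column is the node status.

```shell
#!/bin/bash
# not_ready_nodes (hypothetical helper)
# Reads `kubectl get nodes --no-headers` output on stdin and prints the name
# of every node whose STATUS column is not exactly "Ready". A cordoned node
# ("Ready,SchedulingDisabled") is therefore reported as well.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage on a master node:
#   kubectl get nodes --no-headers | not_ready_nodes
# Empty output means every node reports Ready.
```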

VIII. Changing the service port range. Run on master01, master02, and master03. (This step is optional.)

vim /etc/kubernetes/manifests/kube-apiserver.yaml

---

...

- --tls-private-key-file=/etc/kubernetes/pki/apiserver.key

# Add by cynen 2020-10-12

- --service-node-port-range=80-32767

...

Restart kubelet

systemctl daemon-reload && systemctl restart kubelet

IX. Testing master cluster high availability

1. Test case.

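| Initial state | Test case | Expected result | Actual result |
| --- | --- | --- | --- |
| master01 keepalived.conf: priority = 200; master02 keepalived.conf: priority = 190; master03 keepalived.conf: priority = 180 | 1. master01 goes down (reboot) | 1. While master01 reboots it is out of service; because master02 has a higher priority than master03, the cluster switches to master02. 2. Once master01 has rebooted and rejoined the cluster, it resumes serving and master02 returns to standby. | As expected; see the test results below |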

- Run ip addr on master01: master01 is serving (it holds the VIP).

- Reboot master01: run ping 192.168.1.40 on worknode1, then run reboot on master01.

- After master01 stops serving, master02 immediately takes over the MASTER role.

Reference: https://www.bilibili.com/video/BV15z4y1r7Kw?from=search&seid=10617707946254769257