Problem:

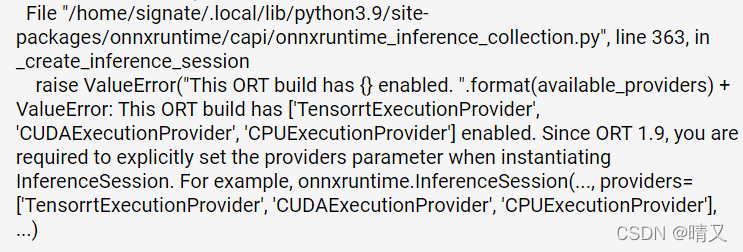

line 363, in _create_inference_session

raise ValueError("This ORT build has {} enabled. ".format(available_providers) +

ValueError: This ORT build has ['TensorrtExecutionProvider', 'CUDAExecutionProvider', 'CPUExecutionProvider'] enabled. Since ORT 1.9, you are required to explicitly set the providers parameter when instantiating InferenceSession. For example, onnxruntime.InferenceSession(…, providers=['TensorrtExecutionProvider', 'CUDAExecutionProvider', 'CPUExecutionProvider'], …)

I found this fix online:

Change:

onnxruntime.InferenceSession(onnx_path)

to:

onnxruntime.InferenceSession(onnx_path, providers=['TensorrtExecutionProvider', 'CUDAExecutionProvider', 'CPUExecutionProvider'])

But I could not find any call written as onnxruntime.InferenceSession in my project.
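When the module is imported under an alias, searching for the module name finds nothing, so it can help to search for the class name `InferenceSession(` instead. The following is only a minimal sketch of such a search, assuming the project is a directory tree of `.py` files; `find_calls` and its parameters are hypothetical names, not part of onnxruntime or Paddle.

```python
from pathlib import Path


def find_calls(root, needle="InferenceSession("):
    """Return (path, line number, line text) for each line containing needle."""
    hits = []
    for path in sorted(Path(root).rglob("*.py")):
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # skip files that cannot be read
        for lineno, line in enumerate(text.splitlines(), start=1):
            if needle in line:
                hits.append((str(path), lineno, line.strip()))
    return hits


if __name__ == "__main__":
    # Scan the current directory; replace "." with the project root as needed.
    for path, lineno, line in find_calls("."):
        print(f"{path}:{lineno}: {line}")
```

Searching for the class name matches the call no matter how the module was imported, for example as `ort.InferenceSession(...)`.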

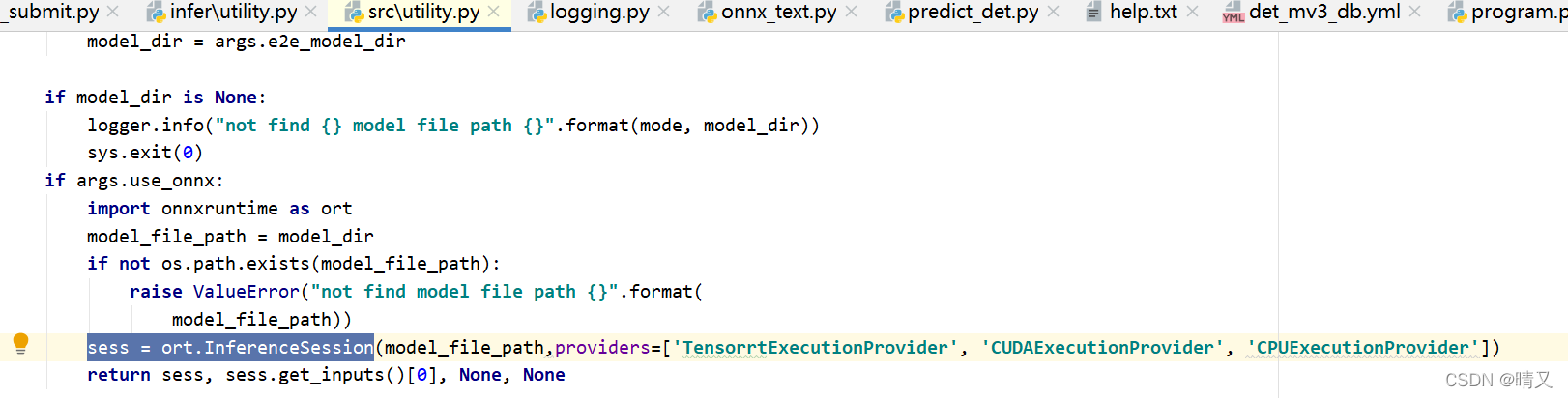

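In the end I found it here, in the utility file of the Paddle project, where the call is written as ort.InferenceSession.

The highlighted part is what I added, and after that it worked.

Before: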

sess = ort.InferenceSession(model_file_path)

After:

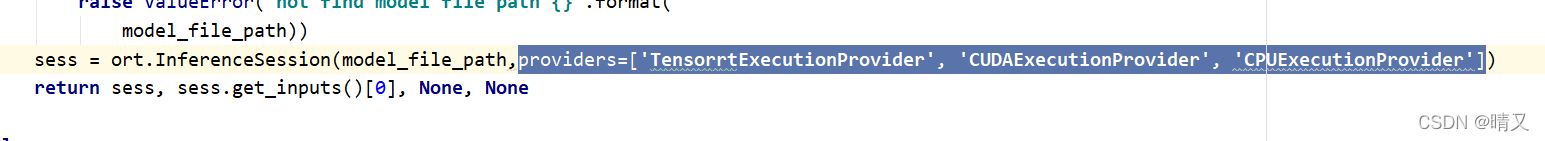

sess = ort.InferenceSession(model_file_path, providers=['TensorrtExecutionProvider', 'CUDAExecutionProvider', 'CPUExecutionProvider'])
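The hard-coded provider list works on this machine because the ORT build reports all three providers. A slightly more portable variant, which is not part of the original fix, is to filter a preferred list against what the build actually reports. The sketch below assumes `onnxruntime` is installed; `select_providers`, `create_session`, and `PREFERRED` are hypothetical helper names, while `get_available_providers()` and the `providers` argument are onnxruntime's own.

```python
PREFERRED = [
    "TensorrtExecutionProvider",
    "CUDAExecutionProvider",
    "CPUExecutionProvider",
]


def select_providers(available, preferred=PREFERRED):
    """Keep the preferred providers that this build reports, in priority order."""
    chosen = [p for p in preferred if p in available]
    if not chosen:
        raise ValueError(f"none of {preferred} found in {available}")
    return chosen


def create_session(model_file_path):
    # Imported here so select_providers can be used without onnxruntime installed.
    import onnxruntime as ort

    providers = select_providers(ort.get_available_providers())
    return ort.InferenceSession(model_file_path, providers=providers)
```

After creating a session, `sess.get_providers()` reports which providers the session actually ended up with, which is a quick way to check whether it is running on the GPU.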