1 The Requests library

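import requests

r = requests.get("https://www.baidu.com/")
print(r.status_code)        # HTTP status code; 200 means success
print(type(r))              # <class 'requests.models.Response'>
print(r.headers)            # response headers
print(r.encoding)           # encoding taken from the HTTP headers
print(r.apparent_encoding)  # encoding guessed from the response body
print(r.text)               # body decoded with r.encoding
print(r.content)            # body as raw bytes
r.encoding = "utf-8"        # override the header-based encoding
print(r.text)               # body decoded as UTF-8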
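The difference between `r.content` and `r.text` comes down to decoding bytes with a chosen codec. The sketch below reproduces that step without any network access. The sample string is an assumption standing in for a real response body, and ISO-8859-1 is used because Requests, as far as I know, falls back to it for text responses whose headers name no charset.

```python
# Illustration only: this sample string stands in for a real response body.
raw = "百度一下,你就知道".encode("utf-8")  # plays the role of r.content (bytes)

# Decoding with the wrong codec yields mojibake, which is how r.text looks
# when r.encoding does not match the page's real encoding.
garbled = raw.decode("iso-8859-1")

# Decoding with the right codec recovers the text, like setting r.encoding = "utf-8".
fixed = raw.decode("utf-8")

print(repr(garbled))
print(fixed)
```

No bytes are lost in the wrong decoding, so re-encoding `garbled` as ISO-8859-1 gives back the original bytes. This is why changing `r.encoding` after the request is enough to repair `r.text`.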

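1.1 Exercise 1

The Requests library is friendly and simple to develop with (apart from the import, one main line of code is enough), but its performance still falls short of a dedicated crawler. Write a small program that picks an arbitrary URL and measures how long it takes to fetch the page successfully 100 times. (Some sites block IPs that request pages in rapid succession, so avoid those.)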

import requests
import time


def getHTMLText(url):
    try:
        r = requests.get(url, timeout=30)
        r.raise_for_status()  # raise HTTPError for 4xx/5xx responses
        r.encoding = r.apparent_encoding
        return r.text
    except requests.RequestException:
        return "An exception occurred"


if __name__ == '__main__':
    url = "http://www.baidu.com/"
    # print(getHTMLText(url))
    begin = time.perf_counter()  # start timing
    for i in range(100):
        text = getHTMLText(url)
        # print(text)
    stop = time.perf_counter()  # stop timing
    runtime = stop - begin
    print("Fetching " + url + " 100 times took " + f"{runtime:.2f}" + "s")

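- This is only an introduction to the requests library; real-world data crawling is still a long way off;

- Consider how this relates to packet capture and similar topics in computer networking and network security;

- Consider how it relates to other data collection methods.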
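The exercise asks for 100 successful fetches, whereas a plain `for` loop counts attempts whether or not they succeed. The sketch below counts successes separately. `time_fetches` and `flaky_fetch` are hypothetical names, and the fetcher is a stand-in that fails on every third call, so the example runs without a network.

```python
import time


def time_fetches(fetch, url, wanted=100, max_attempts=300):
    """Call fetch(url) until `wanted` calls succeed or `max_attempts` is reached."""
    successes = attempts = 0
    begin = time.perf_counter()
    while successes < wanted and attempts < max_attempts:
        attempts += 1
        try:
            fetch(url)
            successes += 1
        except Exception:
            pass  # a failed attempt costs time but is not counted as a success
    elapsed = time.perf_counter() - begin
    return elapsed, attempts, successes


# Hypothetical stand-in for a real fetcher: every third call fails.
calls = {"n": 0}


def flaky_fetch(url):
    calls["n"] += 1
    if calls["n"] % 3 == 0:
        raise RuntimeError("simulated network error")
    return "<html></html>"


elapsed, attempts, successes = time_fetches(flaky_fetch, "http://example.invalid/", wanted=10)
print(f"{successes} successes in {attempts} attempts, {elapsed:.4f}s")
```

To time real requests, pass a function that calls `requests.get(url, timeout=30)` followed by `raise_for_status()` in place of `flaky_fetch`.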

1.2 Exercise 2

Fetching a JD.com product page (JD inspects the header information of incoming requests):

import requests

# Full code
url = "https://item.jd.com/100039135656.html"
kv = {'user-agent': 'Mozilla/5.0'}  # present the request as coming from a browser
try:
    r = requests.get(url, headers=kv)
    r.raise_for_status()
    r.encoding = r.apparent_encoding
    print(r.text[:1000])
except requests.RequestException:
    print("Fetch failed")
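To see what the `headers=kv` argument changes, the request can be built without being sent. This is a minimal sketch that uses `requests.Request(...).prepare()` purely for offline inspection. It does not show how JD actually filters requests, which the notes do not specify.

```python
import requests

url = "https://item.jd.com/100039135656.html"
kv = {"user-agent": "Mozilla/5.0"}

# Build the request without sending it, so the headers can be inspected offline.
prepared = requests.Request("GET", url, headers=kv).prepare()
print(prepared.headers["User-Agent"])  # header lookup is case-insensitive

# The identifier Requests uses when no user-agent is supplied.
print(requests.utils.default_user_agent())
```

After a real request, `r.request.headers` shows the headers that were sent, which is a quick way to confirm the override took effect.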

1.3 Exercise 3

Fetching and saving an image from the web:

import os
import requests

# # Simple code: open path as file object f; request the image to get response r;
# # write r.content to f; the with block closes f automatically.
# path = "D://abc.jpg"
# url = "https://img.bugela.com/uploads/2021/04/26/TX9474_01.jpg"
# r = requests.get(url)
# print(r.status_code)
# with open(path, 'wb') as f:
#     f.write(r.content)

# Full code
url = "https://img.bugela.com/uploads/2021/04/26/TX9474_01.jpg"
root = "D://pics//"
path = root + url.split('/')[-1]  # use the text after the last forward slash as the file name
try:
    if not os.path.exists(root):  # does the root directory exist?
        os.mkdir(root)
    if not os.path.exists(path):  # does the file already exist?
        r = requests.get(url)
        print(r.status_code)
        with open(path, 'wb') as f:  # the with block closes the file on exit
            f.write(r.content)
        print("File saved")
    else:
        print("File already exists")
except (requests.RequestException, OSError):
    print("Fetch failed")
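Taking `url.split('/')[-1]` as the file name works for the URL above, but it would keep a query string or return an empty name for a URL ending in a slash. The sketch below shows one possible alternative. `filename_from_url` and the `download.bin` fallback are hypothetical, not part of the original exercise.

```python
import posixpath
from urllib.parse import urlsplit


def filename_from_url(url, default="download.bin"):
    """Return the last path segment of a URL, ignoring any query string."""
    path = urlsplit(url).path          # drops the scheme, host, query and fragment
    name = posixpath.basename(path)    # URL paths always use forward slashes
    return name or default


url = "https://img.bugela.com/uploads/2021/04/26/TX9474_01.jpg"
print(filename_from_url(url))
print(filename_from_url(url + "?size=large"))
print(filename_from_url("https://example.invalid/"))
```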

1.4 Exercise 4

Automatic lookup of an IP address's location: http://m.ip138.com/ip.asp?ip=ipaddress

import requests

kv = {'user-agent': 'Mozilla/5.0'}
url = "https://m.ip138.com/ip.asp?ip="
# url = "https://user.ip138.com/ip/"
r = requests.get(url+'202.204.80.112', headers=kv)
print(r.status_code)
print(r.text[-500:])  # last 500 characters of the page
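Instead of concatenating the query string by hand, Requests can build it from a `params` dictionary. The sketch below only prepares the request, so it shows the resulting URL without contacting the site. Whether the lookup service still answers at this address is not something the example checks.

```python
import requests

base = "https://m.ip138.com/ip.asp"

# params are URL-encoded and appended to the URL as a query string.
prepared = requests.Request("GET", base, params={"ip": "202.204.80.112"}).prepare()
print(prepared.url)
```

The same dictionary can be passed directly as `requests.get(base, params=..., headers=kv)`.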

2 Web crawling: extraction

2.1 Installing the Beautiful Soup library

2.1.1 Code

import requests
from bs4 import BeautifulSoup

url = "https://python123.io/ws/demo.html"
r = requests.get(url)
demo = r.text
print(r.status_code)
print(demo)
print('--------------------------------------------------')
soup = BeautifulSoup(demo, 'html.parser')
print(soup.prettify())

2.1.2 Console output

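2.2 Basic elements of the Beautiful Soup library

| Basic element | Description |
| --- | --- |
| Tag | A tag, the most basic unit of information organization; `<>` and `</>` mark its start and end |
| Name | The tag's name; the name of `<p>...</p>` is 'p'; accessed as .name |
| Attributes | The tag's attributes, organized as a dictionary; accessed as .attrs |
| NavigableString | The non-attribute string inside a tag, i.e. the string in `<>...</>`; accessed as .string |
| Comment | The comment part of the string inside a tag; a special Comment type |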

2.2.1 Code

import requests
from bs4 import BeautifulSoup

url = "https://python123.io/ws/demo.html"
r = requests.get(url)
demo = r.text
print(r.status_code)
# print(demo)  # print the response body
print('--------------------------------------------------')
soup = BeautifulSoup(demo, 'html.parser')  # parse the response body
# print(soup.prettify())

# Inspect the basic elements of the BeautifulSoup class (Tag, name, attrs, NavigableString, Comment)
# Name: .name
print(soup.title)
print(soup.a)
print(soup.a.name)
print(soup.a.parent.name)
print(soup.a.parent.parent.name)

# Attributes: .attrs
print(soup.a.attrs)  # {'href': 'http://www.icourse163.org/course/BIT-268001', 'class': ['py1'], 'id': 'link1'}
print(soup.a.attrs['class'])  # ['py1']
print(type(soup.a))  # bs4.element.Tag
print(type(soup.a.attrs))  # dict

# NavigableString: .string
print(soup.title.string)  # This is a python demo page
print(soup.a.string)  # Basic Python
print(soup.p.string)  # The demo python introduces several python courses
print(type(soup.p.string))

# Comment: .string
new_soup = BeautifulSoup("<b><!--This is a comment--></b><p>This is not a comment</p>", "html.parser")
print(new_soup.b.name)
print(new_soup.b.string)  # This is a comment
print(type(new_soup.b.string))  # <class 'bs4.element.Comment'>
print(new_soup.p.string)  # This is not a comment
print(type(new_soup.p.string))  # <class 'bs4.element.NavigableString'>
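Two details of `.string` are easy to miss: it prints a comment without its `<!-- -->` markers, and it returns None when a tag has more than one child. The sketch below shows both on a made-up HTML fragment. `kind` is a hypothetical helper, and the fragment is not taken from the demo page.

```python
from bs4 import BeautifulSoup
from bs4.element import Comment, NavigableString

html = "<div><!--note-->text<p>Hello <b>world</b></p><i>plain</i></div>"
soup = BeautifulSoup(html, "html.parser")

# <p> has two children (a string and a <b> tag), so .string cannot pick one.
print(soup.p.string)      # None
print(soup.p.get_text())  # concatenates all the text inside <p>


def kind(node):
    """Classify a node; Comment subclasses NavigableString, so test it first."""
    if isinstance(node, Comment):
        return "comment"
    if isinstance(node, NavigableString):
        return "text"
    return "tag"


kinds = [kind(child) for child in soup.div.contents]
print(kinds)
```

Checking the type, as `kind` does, is the reliable way to tell a comment from ordinary text, since the printed value alone does not reveal it.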

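2.2.2 Console output

2.3 Traversing HTML content with the bs4 library

- HTML is essentially text with a tree structure: the tags mark the logical relationships within the information

- Following the basic HTML format, traversal runs from the root to the leaves, from the leaves to the root, or between sibling nodes, which gives three kinds: downward, upward, and sibling traversal.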

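2.3.1 Downward traversal of the tag tree

(1) Basic principle

| Attribute | Description |
| --- | --- |
| .contents | List of child nodes; stores all children of `<tag>` in a list |
| .children | Iterator over child nodes; like .contents, used to loop over the children |
| .descendants | Iterator over descendant nodes; includes all descendants, used for looping |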

(2) Code

import requests
from bs4 import BeautifulSoup

url = "https://python123.io/ws/demo.html"
r = requests.get(url)
demo = r.text
# print(demo)
# Parse the returned HTML with Beautiful Soup
soup = BeautifulSoup(demo, 'html.parser')
# Downward traversal: 1) .contents: list of the children of <tag>; 2) .children: iterator over the children of <tag>, used to loop over them;
# 3) .descendants: iterator over all descendants of <tag>, used for looping
# The BeautifulSoup object is the root node of the tag tree
print(soup.head)  # <head><title>This is a python demo page</title></head>
print(soup.head.contents)  # [<title>This is a python demo page</title>]
print(soup.body.contents)
print(soup.body.contents[1])  # <p class="title"><b>The demo python introduces several python courses.</b></p>
print(len(soup.body.contents))  # 5
for child in soup.body.children:
    print(child)  # iterate over child nodes
for child in soup.body.descendants:
    print(child)  # iterate over descendant nodes
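The child count of a tag includes the newline strings between its child tags, which is why `soup.body.contents[1]` rather than `[0]` is the first `<p>` above. The sketch below makes the counts visible on a small made-up fragment (an assumption, not the demo page) by filtering on `Tag`.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

html = "<body>\n<p>one</p>\n<p><b>two</b></p>\n</body>"
soup = BeautifulSoup(html, "html.parser")

all_children = soup.body.contents  # tags and the newline strings between them
tag_children = [c for c in soup.body.children if isinstance(c, Tag)]
tag_descendants = [d.name for d in soup.body.descendants if isinstance(d, Tag)]

print(len(all_children), len(tag_children), tag_descendants)
```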

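(3) Console output

2.3.2 Upward traversal of the tag tree

(1) Basic principle

| Attribute | Description |
| --- | --- |
| .parent | The node's parent tag |
| .parents | Iterator over the node's ancestor tags, used to loop over the ancestors |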

(2) Implementation

# Upward traversal: 1) .parent: the node's parent tag; 2) .parents: iterator over the node's ancestor tags, used to loop over the ancestors
print(soup.title)  # <title>This is a python demo page</title>
print(soup.title.parent)  # <head><title>This is a python demo page</title></head>
# print(soup.html)
# print("--------------------------")
# print(soup.html.parent)  # prints the whole document: the parent of <html> is the BeautifulSoup object itself, not None
# print(soup)
# print(soup.parent)  # None
print('a:', soup.a)
print(soup.a.parents)  # <generator object PageElement.parents at 0x000002ADEE6509E0>
print(type(soup.a.parents))  # <class 'generator'>
for parent in soup.a.parents:
    if parent is None:
        print(parent)
    else:
        print(parent.name)
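The chain of ancestors ends at the BeautifulSoup object, whose name is `[document]`, and only that object has no parent. The sketch below checks this on a small made-up fragment rather than on the demo page.

```python
from bs4 import BeautifulSoup

html = "<html><body><p><a href='#'>x</a></p></body></html>"
soup = BeautifulSoup(html, "html.parser")

# Walk upward from <a>: nearest ancestor first, the document object last.
names = [parent.name for parent in soup.a.parents]
print(names)

print(soup.html.parent is soup)  # the parent of <html> is the BeautifulSoup object
print(soup.parent)               # the root itself has no parent
```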

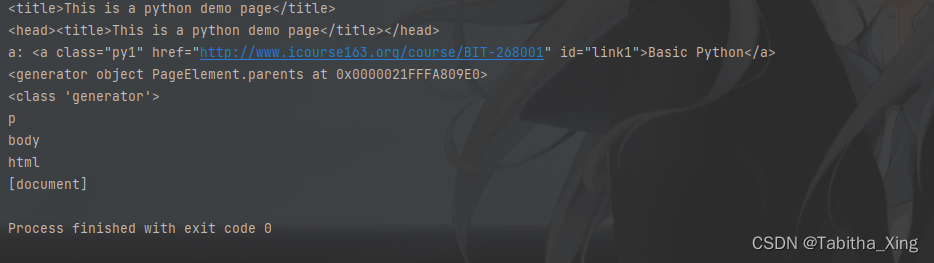

(3) Console output

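2.3.3 Sibling traversal of the tag tree

(1) Basic principle

| Attribute | Description |
| --- | --- |
| .next_sibling | Returns the next sibling node in HTML text order |
| .previous_sibling | Returns the previous sibling node in HTML text order |
| .next_siblings | Iterator; returns all following sibling nodes in HTML text order |
| .previous_siblings | Iterator; returns all preceding sibling nodes in HTML text order |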

(2) Code

# Sibling traversal: takes place among the children of the same parent node
print(soup.a.next_sibling)
print(soup.a.next_sibling.next_sibling)
print(soup.a.previous_sibling)
print(soup.a.previous_sibling.previous_sibling)
print(soup.a.parent)
for sibling in soup.a.next_siblings:  # plural: iterate over all following siblings
    print(sibling)
for sibling in soup.a.previous_siblings:  # plural: iterate over all preceding siblings
    print(sibling)
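Siblings are not always tags: the text between two tags is a sibling too, so `.next_sibling` often returns a string. The sketch below shows this on a made-up fragment, together with two ways of reaching the next tag. The HTML is an assumption modelled loosely on the demo page's links.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

html = '<p>Courses: <a id="link1">Basic Python</a> and <a id="link2">Advanced Python</a>.</p>'
soup = BeautifulSoup(html, "html.parser")
first = soup.a

print(repr(first.previous_sibling))  # the text before the first link
print(repr(first.next_sibling))      # the text between the two links

# Keep only the siblings that are tags.
tag_sibling_ids = [s["id"] for s in first.next_siblings if isinstance(s, Tag)]
print(tag_sibling_ids)

# find_next_sibling() skips strings and returns the first matching tag.
next_link = first.find_next_sibling("a")
print(next_link["id"])
```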

2.4 Information marking and extraction methods

2.4.1 Information marking

- XML: <> …</>

- JSON: typed key:value

- YAML: untyped key:value
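To make the three formats concrete, the sketch below writes one made-up record in each of them using only the standard library. The `course` record is an assumption for illustration, and the YAML text is written by hand because the standard library has no YAML module.

```python
import json
import xml.etree.ElementTree as ET

course = {"name": "Basic Python", "hours": 36}

# JSON: key:value pairs whose values carry a type (string, number, ...).
json_text = json.dumps(course)

# XML: every piece of information is wrapped in a pair of tags.
root = ET.Element("course")
for key, value in course.items():
    ET.SubElement(root, key).text = str(value)
xml_text = ET.tostring(root, encoding="unicode")

# YAML: key:value pairs without type markers (hand-written, not generated).
yaml_text = "name: Basic Python\nhours: 36\n"

print(xml_text)
print(json_text)
print(yaml_text)

# Reading back: JSON returns the number as an int, XML returns it as text.
hours_from_json = json.loads(json_text)["hours"]
hours_from_xml = ET.fromstring(xml_text).find("hours").text
print(type(hours_from_json).__name__, type(hours_from_xml).__name__)
```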

2.4.2 Information extraction

import requests
from bs4 import BeautifulSoup
import re

r = requests.get("https://python123.io/ws/demo.html")
demo = r.text

# BeautifulSoup: a Python library for extracting data from HTML or XML files; it uses a parser to navigate, search, and modify the document
# Find tags: soup.find_all('tag')
# Find text: soup.find_all(string='text')
# Find by id: soup.find_all(id='tag id')
# Use regular expressions: soup.find_all(string=re.compile('your re')), soup.find_all(id=re.compile('your re'))
# Find tags with given attributes: soup.find_all('tag', {'id': 'tag id', 'class': 'tag class'})
soup = BeautifulSoup(demo, 'html.parser')
for link in soup.find_all('a'):  # find every <a> tag in soup
    print(link.get('href'))
print(soup.find_all('a'))
print(soup.find_all(['a', 'b']))
for tag in soup.find_all(True):  # True matches every tag
    print(tag.name)
for tag in soup.find_all(re.compile('b')):  # the regex is applied to tag names with search(), so this finds every tag whose name contains 'b'
    print(tag.name)
print(soup.find_all('p', 'course'))  # <p> tags whose class is 'course'
print(soup.find_all(id='link1'))
print(soup.find_all(id='link'))  # a plain string must match the id exactly
print(soup.find_all(id=re.compile('link')))  # ids containing 'link'
print(soup.find_all('a', recursive=False))  # search only the direct children of soup
print(soup.find_all(string='Basic Python'))
print(soup.find_all(string=re.compile('python')))

# Extension methods
# <>.find() searches and returns only one result
# <>.find_parents() searches the ancestor nodes and returns a list; same parameters as .find_all()
# <>.find_parent() returns one result from the ancestor nodes
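The extension methods and the regular-expression filters can be tried on a small made-up fragment, which keeps the results easy to predict. The HTML below is an assumption, not the demo page. The regex behaviour follows the Beautiful Soup documentation, which states that patterns are applied with `search()`.

```python
import re
from bs4 import BeautifulSoup

html = ('<html><body><p class="course">See <a id="link1" href="/basic">Basic Python</a>'
        ' and <a id="link2" href="/adv">Advanced Python</a></p><b>bold</b></body></html>')
soup = BeautifulSoup(html, "html.parser")

first = soup.find("a")                        # only the first match
nearest_p = first.find_parent("p")            # the closest matching ancestor
ancestors = [t.name for t in first.find_parents(["p", "body"])]  # nearest first

# A regex on tag names matches anywhere in the name: both 'body' and 'b' contain 'b'.
b_names = [t.name for t in soup.find_all(re.compile("b"))]

# A plain string must equal the id; a regex only needs to be found inside it.
exact = soup.find_all(id="link")
partial = soup.find_all(id=re.compile("link"))

# <a> is not a direct child of the document root, so nothing is found.
direct = soup.find_all("a", recursive=False)

print(first["id"], nearest_p["class"], ancestors, b_names)
print(len(exact), len(partial), len(direct))
```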