算法基础

激活函数

- 激活函数的作用

激活函数把非线性引入了神经网络

- 后面的代码用到的3种激活函数

- relu

r

e

l

u

(

x

)

=

{

x

x

>

=

0

0

x

<

0

relu(x)\;\;=\;\left\{\begin{array}{lc}x&x\;>=\;0\\0&x\;<\;0\end{array}\right.

relu(x)={x0x>=0x<0

d

(

r

e

l

u

(

x

)

)

d

x

=

{

1

x

>

=

0

0

x

<

0

\frac{d(relu(x))}{dx}\;=\;\left\{\begin{array}{lc}1&x\;>=\;0\\0&x\;<\;0\end{array}\right.

dxd(relu(x))={10x>=0x<0

- sigmiod

s

i

g

m

o

i

d

(

x

)

=

1

1

+

e

−

x

sigmoid(x)\;=\;\frac1{1+e^{-x}}

sigmoid(x)=1+e−x1

d

(

s

i

g

m

o

i

d

(

x

)

)

d

x

=

1

(

1

+

e

−

x

)

−

1

(

1

+

e

−

x

)

2

\frac{d(sigmoid(x))}{dx}\;=\;\frac1{(1+e^{-x})}-\frac1{{(1+e^{-x})}^2}

dxd(sigmoid(x))=(1+e−x)1−(1+e−x)21

d

(

s

i

g

m

o

i

d

(

x

)

)

d

x

=

s

i

g

m

o

i

d

(

x

)

∗

(

1

−

s

i

g

m

o

i

d

(

x

)

)

\frac{d(sigmoid(x))}{dx}\;=\;sigmoid(x)\ast(1-sigmoid(x))

dxd(sigmoid(x))=sigmoid(x)∗(1−sigmoid(x))

- softmax

softmax的作用是把一个向量变成一个概率向量,下面用一个

设X = [x1, x2]

s

o

f

t

m

a

x

(

X

)

=

[

e

x

1

e

x

1

+

e

x

2

,

e

x

2

e

x

1

+

e

x

2

]

softmax(X)\;=\;\lbrack\frac{e^{x1}}{e^{x1}+e^{x2}},\;\frac{e^{x2}}{e^{x1}+e^{x2}}\rbrack

softmax(X)=[ex1+ex2ex1,ex1+ex2ex2]

看下式,一个向量对一个向量求导,得到的是一个矩阵,叫做雅克比矩阵

d

(

s

o

f

t

m

a

x

(

X

)

)

d

X

=

[

∂

(

e

x

1

e

x

1

+

e

x

2

)

∂

x

1

∂

(

e

x

2

e

x

1

+

e

x

2

)

∂

x

1

∂

(

e

x

1

e

x

1

+

e

x

2

)

∂

x

2

∂

(

e

x

2

e

x

1

+

e

x

2

)

∂

x

2

]

=

[

e

x

1

∗

e

x

2

(

e

x

1

+

e

x

2

)

2

−

e

x

1

∗

e

x

2

(

e

x

1

+

e

x

2

)

2

−

e

x

1

∗

e

x

2

(

e

x

1

+

e

x

2

)

2

e

x

1

∗

e

x

2

(

e

x

1

+

e

x

2

)

2

]

\frac{d(softmax(X))}{dX}\;=\;\begin{bmatrix}\frac{\partial{\displaystyle(\frac{e^{x1}}{e^{x1}+e^{x2}})}}{\partial x1}&\frac{\partial{\displaystyle(\frac{e^{x2}}{e^{x1}+e^{x2}})}}{\partial x1}\\\frac{\partial{\displaystyle(\frac{e^{x1}}{e^{x1}+e^{x2}})}}{\partial x2}&\frac{\partial{\displaystyle(\frac{e^{x2}}{e^{x1}+e^{x2}})}}{\partial x2}\end{bmatrix}\;=\;\begin{bmatrix}\frac{e^{x1}\ast e^{x2}}{{(e^{x1}\;+\;e^{x2})}^2}&\frac{-e^{x1}\ast e^{x2}}{{(e^{x1}\;+\;e^{x2})}^2}\\\frac{-e^{x1}\ast e^{x2}}{{(e^{x1}\;+\;e^{x2})}^2}&\frac{e^{x1}\ast e^{x2}}{{(e^{x1}\;+\;e^{x2})}^2}\end{bmatrix}

dXd(softmax(X))=⎣⎢⎢⎢⎡∂x1∂(ex1+ex2ex1)∂x2∂(ex1+ex2ex1)∂x1∂(ex1+ex2ex2)∂x2∂(ex1+ex2ex2)⎦⎥⎥⎥⎤=[(ex1+ex2)2ex1∗ex2(ex1+ex2)2−ex1∗ex2(ex1+ex2)2−ex1∗ex2(ex1+ex2)2ex1∗ex2]

d

(

s

o

f

t

m

a

x

(

X

)

)

d

X

=

[

s

o

f

t

m

a

x

(

X

)

1

∗

s

o

f

t

m

a

x

(

X

)

2

−

s

o

f

t

m

a

x

(

X

)

1

∗

s

o

f

t

m

a

x

(

X

)

2

−

s

o

f

t

m

a

x

(

X

)

1

∗

s

o

f

t

m

a

x

(

X

)

2

s

o

f

t

m

a

x

(

X

)

1

∗

s

o

f

t

m

a

x

(

X

)

2

]

\frac{d(softmax(X))}{dX}\;=\;\begin{bmatrix}softmax{(X)}_1\ast softmax{(X)}_2&-softmax{(X)}_1\ast softmax{(X)}_2\\-softmax{(X)}_1\ast softmax{(X)}_2&softmax{(X)}_1\ast softmax{(X)}_2\end{bmatrix}

dXd(softmax(X))=[softmax(X)1∗softmax(X)2−softmax(X)1∗softmax(X)2−softmax(X)1∗softmax(X)2softmax(X)1∗softmax(X)2]

- 可以发现激活函数的导数是很简单的形式,这是因为反向传播的时候是要求导的,因此是故意这样设计的

损失函数

- 损失函数通常作为学习准则与优化问题相联系,即通过最小化损失函数求解和评估模型。通俗点说就是我们在训练集里面拿一堆数据喂给模型,模型会根据这对数据预测出一个结果,这个结果和模型的权重有关,而我们的训练集会有标签,损失函数就是用来表达模型推断出来的结果和标签之间的差距的一个值,这个值越小,代表我们的模型的预测结果和我们的标签越接近,因此我们的优化目标就是要优化我们的权重,让我们的损失函数减小。

- 我下面的示例代码实现的是分类问题,分类问题中有个经典的损失函数,交叉熵,交叉熵可以表达两个概率分布之间的差距,公式如下,下面的X1,X2,都是一个n维的概率向量。

e

r

o

s

s

_

e

n

t

r

o

p

y

(

X

1

,

X

2

)

=

−

∑

i

=

1

n

X

1

i

ln

(

X

2

i

)

eross\_entropy(X1,\;X2)\;=\;-\sum_{i=1}^nX1_i\ln(X2_i)

eross_entropy(X1,X2)=−i=1∑nX1iln(X2i)

我们一般把label赋值给X1, 模型预测值赋值给X2

设X1 = [l1, l2], X2 = [x1, x2]

δ

e

r

o

s

s

_

e

n

t

r

o

p

y

(

X

1

,

X

2

)

δ

X

2

=

[

δ

(

−

l

1

∗

ln

(

x

1

)

−

l

2

∗

ln

(

x

2

)

)

δ

x

1

δ

(

−

l

1

∗

ln

(

x

1

)

−

l

2

∗

ln

(

x

2

)

)

δ

x

2

]

=

[

−

l

1

x

1

−

l

2

x

2

]

\frac{\delta eross\_entropy(X1,\;X2)}{\delta X2}\;=\;\begin{bmatrix}\frac{\delta(-l1\ast\ln(x1)\;-\;l2\ast\ln(x2))}{\delta x1}&\frac{\delta(-l1\ast\ln(x1)\;-\;l2\ast\ln(x2))}{\delta x2}\end{bmatrix}\;=\;\begin{bmatrix}-\frac{l1}{x1}&-\frac{l2}{x2}\end{bmatrix}

δX2δeross_entropy(X1,X2)=[δx1δ(−l1∗ln(x1)−l2∗ln(x2))δx2δ(−l1∗ln(x1)−l2∗ln(x2))]=[−x1l1−x2l2]

如上式,标量对向量求导得到一个向量

因为反向传播的时候也是要对损失函数求导的,所以损失函数的导数的形式也是比较简单的

链式法则

- 上面说的,我们要优化我们的模型参数,使得损失函数尽量减小。有一种优化的方法叫做梯度下降法,还记得梯度的定义吗,梯度的本意是一个向量,表示某一函数在该点处的方向导数沿着该方向取得最大值,即函数在该点处沿着该方向变化最快,变化率最大。所以我们的模型权重就要往梯度的反方向走就可以让我们的loss很快的下降。所以现在优化问题变成了求导问题,还记得高中学的符合函数求导时用的链式法则吗,神经网络一层层前传时就是一个标准的复合函数,区别只是高中时都是标量间的求导,这里是标量对向量,向量对向量的求导。

向量求导

- 这部分比较长,你们可以看我后面的代码,里面用matlab实现了一系列的链式求导的过程。

这里贴上一个大佬的链接

矩阵求导总结链接

代码示例

代码文件结构说明

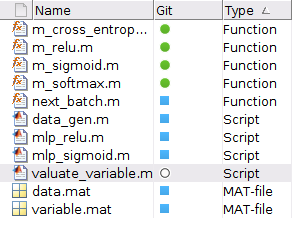

- 这是我的代码的文件结构,分别为5个Function,4个Scrip,2个数据文件

- 运行顺序为

-

运行data_gen.m (再文件夹中生成data.mat,并画出数据集分布,我给出的链接已经有了,这步可跳过)

-

运行mlp_relu.m或mlp_sigmoid.m (两个脚本的功能一样,都是训练一个多层感知机并把模型参数保存到varibale.mat里面,并且画出损失函数的变化趋势,这个文件已经有的话就可以跳过这一步,推荐运行mlp_relu.m,效果比较好)

-

运行valuate_variable.m (检验模型的性能,画出模型的分类结果,可以和data_gen.m生成的数据集做对比)

函数脚本

- m_cross_entropy.m

实现了求两个向量的交叉熵

function [output] = m_cross_entropy(predicts,labels)

predicts_log = -log(predicts);

output = predicts_log.*labels;

output = sum(output, 2);

output = mean(output, 1);

end

function [output] = m_relu(input)

size_input = size(input);

output = input;

for index = 1:size_input(1)*size_input(2)

if input(index) < 0

output(index) = 0;

end

end

end

- m_sigmoid.m

实现了激活函数sigmoid

function [output] = m_sigmoid(input)

output = 1 ./ (1 + exp(-input));

end

- m_softmax.m

实现了激活函数softmax

function [output] = m_softmax(input)

input_exp = exp(input);

total_exp = sum(input_exp, 2);

output = input_exp ./ total_exp;

end

function [data_output,label_output] = next_batch(data_input,label_input,batch_size)

size_input = size(data_input);

array = randperm(size_input(1));

index = array(1:batch_size);

data_output = data_input(index, :);

label_output = label_input(index, :);

end

可运行脚本

- data_gen.m

随机生成数据集并把数据集分布画出来

clear

class1_total = 150;

class2_total = 250;

r1 = 5*rand(1, class1_total);

theta1 = 2*pi*rand(1, class1_total);

r2 = 7+3*rand(1, class2_total);

theta2 = 2*pi*rand(1, class2_total);

x1 = r1.*cos(theta1);

y1 = r1.*sin(theta1);

x2 = r2.*cos(theta2);

y2 = r2.*sin(theta2);

X1 = [x1; y1];

X1 = X1';

X2 = [x2; y2];

X2 = X2';

labels1 = [ones(class1_total, 1), zeros(class1_total, 1)];

labels2 = [zeros(class2_total, 1), ones(class2_total, 1)];

save('data.mat', 'X1', 'X2', 'labels1', 'labels2')

plot(X1(:,1), X1(:,2), '+r', X2(:,1), X2(:,2), '*b')

title("数据集")

- mlp_relu.m

训练一个多层感知机并把模型参数保存到varibale.mat里面,并且画出损失函数的变化趋势,下面是几个核心的部分

clear

% 定义超参数

LearningRate = 0.03;

Batch_size = 128;

LayerInput_nodes = 2;

LayerHide1_nodes = 4;

LayerHide2_nodes = 3;

LayerOutput_nodes = 2;

Steps = 3000;

regulate = 0.001;

loss_array = zeros(1, Steps);

load("data.mat")

% plot(X1(:,1), X1(:,2), '+r', X2(:,1), X2(:,2), '*b')

% 数据集预处理

X_data = [X1; X2];

Y_data = [labels1; labels2];

Weight1 = normrnd(0, 1, LayerInput_nodes, LayerHide1_nodes);

% Biases1 = normrnd(0, 0.001, 1, LayerHide1_nodes);

Biases1 = zeros(1, LayerHide1_nodes) + 0.1;

Weight2 = normrnd(0, 1, LayerHide1_nodes, LayerHide2_nodes);

% Biases2 = normrnd(0, 0.001, 1, LayerHide2_nodes);

Biases2 = zeros(1, LayerHide2_nodes) + 0.1;

Weight3 = normrnd(0, 1, LayerHide2_nodes, LayerOutput_nodes);

% Biases3 = normrnd(0, 0.001, 1, LayerOutput_nodes);

Biases3 = zeros(1, LayerOutput_nodes) + 0.1;

for globel_step = 1:Steps

% 获取本批次的数据集

[x_batch, y_batch] = next_batch(X_data, Y_data, Batch_size);

% Fully Connect Layer1

y_l1 = x_batch * Weight1 + Biases1;

y_a1 = m_relu(y_l1);

% Fully Connect Layer2

y_l2 = y_a1 * Weight2 + Biases2;

y_a2 = m_relu(y_l2);

% Fully Connect Layer3

y_l3 = y_a2 * Weight3 + Biases3;

y_pre = m_softmax(y_l3);

% loss function

loss = m_cross_entropy(y_pre, y_batch);

% record the loss value of each batch

loss_array(globel_step) = loss;

% 对交叉熵函数求导

loss_grad = -y_batch ./ y_pre;

% 定义每一轮的权重和偏置的变化量

Weight1_deta = zeros(size(Weight1));

Biases1_deta = zeros(size(Biases1));

Weight2_deta = zeros(size(Weight2));

Biases2_deta = zeros(size(Biases2));

Weight3_deta = zeros(size(Weight3));

Biases3_deta = zeros(size(Biases3));

% 遍历整个batch

for batch_index = 1:Batch_size

% 对softmax函数求导得到的雅克比矩阵

softmax_jaco = [y_pre(batch_index, 1)*y_pre(batch_index, 2), -y_pre(batch_index, 1)*y_pre(batch_index, 2);

-y_pre(batch_index, 1)*y_pre(batch_index, 2), y_pre(batch_index, 1)*y_pre(batch_index, 2)];

% 累加权重和偏置的梯度

temp_yl3_grad = loss_grad(batch_index, :)*softmax_jaco;

Biases3_deta = Biases3_deta + temp_yl3_grad;

Weight3_deta = Weight3_deta + (y_a2(batch_index, :)' * temp_yl3_grad);

temp_yl2_jaco = eye(size(y_a2, 2));

temp_index_l2 = find(y_l2(batch_index, :) < 0);

temp_yl2_jaco(temp_index_l2, temp_index_l2) = 0;

temp_yl2_grad = temp_yl3_grad * Weight3' * temp_yl2_jaco;

Biases2_deta = Biases2_deta + temp_yl2_grad;

Weight2_deta = Weight2_deta + (y_a1(batch_index, :)' * temp_yl2_grad);

temp_yl1_jaco = eye(size(y_a1, 2));

temp_index_l1 = find(y_l1(batch_index, :) < 0);

temp_yl1_jaco(temp_index_l1, temp_index_l1) = 0;

temp_yl1_grad = temp_yl2_grad * Weight2' * temp_yl1_jaco;

Biases1_deta = Biases1_deta + temp_yl1_grad;

Weight1_deta = Weight1_deta + (x_batch(batch_index, :)' * temp_yl1_grad);

end

% 权重和偏置的变化量为这一批数据的平均值乘以学习率

Weight1_deta = -LearningRate*Weight1_deta/Batch_size;

Biases1_deta = -LearningRate*Biases1_deta/Batch_size;

Weight2_deta = -LearningRate*Weight2_deta/Batch_size;

Biases2_deta = -LearningRate*Biases2_deta/Batch_size;

Weight3_deta = -LearningRate*Weight3_deta/Batch_size;

Biases3_deta = -LearningRate*Biases3_deta/Batch_size;

% 更新权重和偏置

Weight1 = Weight1 + Weight1_deta;

Biases1 = Biases1 + Biases1_deta;

Weight2 = Weight2 + Weight2_deta;

Biases2 = Biases2 + Biases2_deta;

Weight3 = Weight3 + Weight3_deta;

Biases3 = Biases3 + Biases3_deta;

% 正则化

Weight1 = Weight1 - Weight1*regulate;

Biases1 = Biases1 - Biases1*regulate;

Weight2 = Weight2 - Weight2*regulate;

Biases2 = Biases2 - Biases2*regulate;

Weight3 = Weight3 - Weight3*regulate;

Biases3 = Biases3 - Biases3*regulate;

end

save('variable.mat', 'Weight1', 'Biases1', 'Weight2', 'Biases2', 'Weight3', 'Biases3')

plot(loss_array)

title("relu loss value")

- valuate_variable.m

检验模型的性能,画出模型的分类结果,可以和data_gen.m生成的数据集做对比

clear

load('variable.mat')

THRESHOLD = 0.6;

x_input = linspace(-12, 12, 100);

y_input = linspace(-12, 12, 100);

[x_input, y_input] = meshgrid(x_input, y_input);

x_input = reshape(x_input, size(x_input, 1)*size(x_input, 2), 1);

y_input = reshape(y_input, size(y_input, 1)*size(y_input, 2), 1);

input = [x_input, y_input];

y_l1 = input * Weight1 + Biases1;

y_a1 = m_relu(y_l1);

% Fully Connect Layer2

y_l2 = y_a1 * Weight2 + Biases2;

y_a2 = m_relu(y_l2);

% Fully Connect Layer3

y_l3 = y_a2 * Weight3 + Biases3;

y_pre = m_softmax(y_l3);

index1 = find(y_pre(:, 1) > THRESHOLD);

% index1 = find(y_pre(:, 1) >= y_pre(:, 2));

class1 = input(index1, :);

index2 = find(y_pre(:, 2) > THRESHOLD);

% index2 = find(y_pre(:, 1) < y_pre(:, 2));

class2 = input(index2, :);

plot(class1(:, 1), class1(:, 2), '+b', class2(:, 1), class2(:, 2), '*r');

axis equal

title("test")

效果演示

- 运行data_gen.m,以下是生成的数据集,圆内为一类,圆外为另一类

- 运行mlp_relu.m, 以下是损失函数的变化趋势,最后稳定再0.1左右

- 运行valuate_variable.m,以下是模型对平面上点进行分类,可以和上面运行data_gen.m时生成的数据集做对比,模型确实学习到了数据集的特征,成功的把圆内的分成了一类,圆外的分成了一类

代码下载链接

- 下面是我放在CSDN下载里面的代码压缩包,内容为我上面的代码和数据文件

代码压缩包链接

希望可以抛砖引玉,欢迎大家到评论区和我交流