通过对比VINS-Mono与其RGBD版本,分析其改动思路

一、feature_tracker

头文件加入了ros的多传感器时间戳

#include <message_filters/time_synchronizer.h>

#include <message_filters/synchronizer.h>

#include <message_filters/sync_policies/approximate_time.h>

主程序中

ros::Subscriber sub_img = n.subscribe(IMAGE_TOPIC, 100, img_callback);

改为

message_filters::Subscriber<sensor_msgs::Image> sub_image(n, IMAGE_TOPIC, 1);

message_filters::Subscriber<sensor_msgs::Image> sub_depth(n, DEPTH_TOPIC, 1);

typedef message_filters::sync_policies::ApproximateTime<sensor_msgs::Image,sensor_msgs::Image> syncPolicy;

message_filters::Synchronizer<syncPolicy> sync(syncPolicy(10), sub_image, sub_depth);

sync.registerCallback(boost::bind(&img_callback, _1, _2));

参照 ros消息时间同步与回调_kint_zhao的博客-CSDN博客

回调函数也需要修改,接收depth,且此时将img_msg全改为color_msg

void img_callback(const sensor_msgs::ImageConstPtr &img_msg)

void img_callback(const sensor_msgs::ImageConstPtr &color_msg, const sensor_msgs::ImageConstPtr &depth_msg)

增加以下,把depth的ros message也转成cv::Mat,放在image里,创造depth_ptr指向

cv_bridge::CvImageConstPtr depth_ptr;

{

sensor_msgs::Image img;

img.header = depth_msg->header;

img.height = depth_msg->height;

img.width = depth_msg->width;

img.is_bigendian = depth_msg->is_bigendian;

img.step = depth_msg->step;

img.data = depth_msg->data;

img.encoding = sensor_msgs::image_encodings::MONO16;

depth_ptr = cv_bridge::toCvCopy(img, sensor_msgs::image_encodings::MONO16);

}

下面的readImage(),加入depth_ptr

trackerData[i].readImage(ptr->image.rowRange(ROW * i, ROW * (i + 1)), img_msg->header.stamp.toSec());

trackerData[i].readImage(ptr->image.rowRange(ROW * i, ROW * (i + 1)), depth_ptr->image.rowRange(ROW * i, ROW * (i + 1)), color_msg->header.stamp.toSec());

双目才用,可改可不改

trackerData[i].cur_img = ptr->image.rowRange(ROW * i, ROW * (i + 1));

trackerData[i].cur_img = ptr->image.rowRange(ROW * i, ROW * (i + 1));

trackerData[i].cur_depth = depth_ptr->image.rowRange(ROW * i, ROW * (i + 1));

经过readImage()操作以后,prev-cur-forw帧所有的数据都基本上获取了也对应上了,然后就需要封装到sensor_msgs发布出去,由vins_estimator_node和rviz接收,分别进行后端操作和可视化。

发布过程中,生成feature_points的过程:给后端喂数据时,增加封装depth为sensor_msgs

if (PUB_THIS_FRAME)

{

cv::Mat show_depth = depth_ptr->image;

...

sensor_msgs::ChannelFloat32 velocity_x_of_point;

sensor_msgs::ChannelFloat32 velocity_y_of_point;

sensor_msgs::ChannelFloat32 depth_of_point;

利用这个ros消息的格式进行信息存储,增加depth

向depth_of_point.values添加像素2D点(u,v)的深度信息

for (int i = 0; i < NUM_OF_CAM; i++)

...

velocity_x_of_point.values.push_back(pts_velocity[j].x);

velocity_y_of_point.values.push_back(pts_velocity[j].y);

depth_of_point.values.push_back((int)show_depth.at<unsigned short>(round(cur_pts[j].y), round(cur_pts[j].x)));

将特征点id,矫正后归一化平面的3D点(x,y,z=1),像素2D点(u,v),像素的速度(vx,vy),和深度depth封装成sensor_msgs::PointCloudPtr类型的feature_points实例中,发布到pub_img

feature_points->channels.push_back(velocity_x_of_point);

feature_points->channels.push_back(velocity_y_of_point);

feature_points->channels.push_back(depth_of_point);

从头文件可以看到,改了readImage()和 增加了特征点的depth信息

void readImage(const cv::Mat &_img, const cv::Mat &_depth, double _cur_time);

...

cv::Mat prev_depth, cur_depth, forw_depth;

接下来看readImage()具体加了什么

如果第一次输入图像,prev_img这个没用,prev_depth也是

if (forw_img.empty())

{

prev_img = cur_img = forw_img = img;

prev_depth = cur_depth = forw_depth = _depth;

}

else

{

forw_img = img;

forw_depth = _depth;

}

到函数末尾,所有特征点去畸变,计算速度之后,往前走,准备接收新帧,depth跟着走

prev_img = cur_img;

prev_depth = cur_depth;

prev_pts = cur_pts;

prev_un_pts = cur_un_pts;

cur_img = forw_img;

cur_depth = forw_depth;

cur_pts = forw_pts;

undistortedPoints();

prev_time = cur_time;

增加了深度话题,feature_tracker_node.cpp调用

extern std::string DEPTH_TOPIC;

fsSettings["depth_topic"] >> DEPTH_TOPIC;

二、 vins-estimator

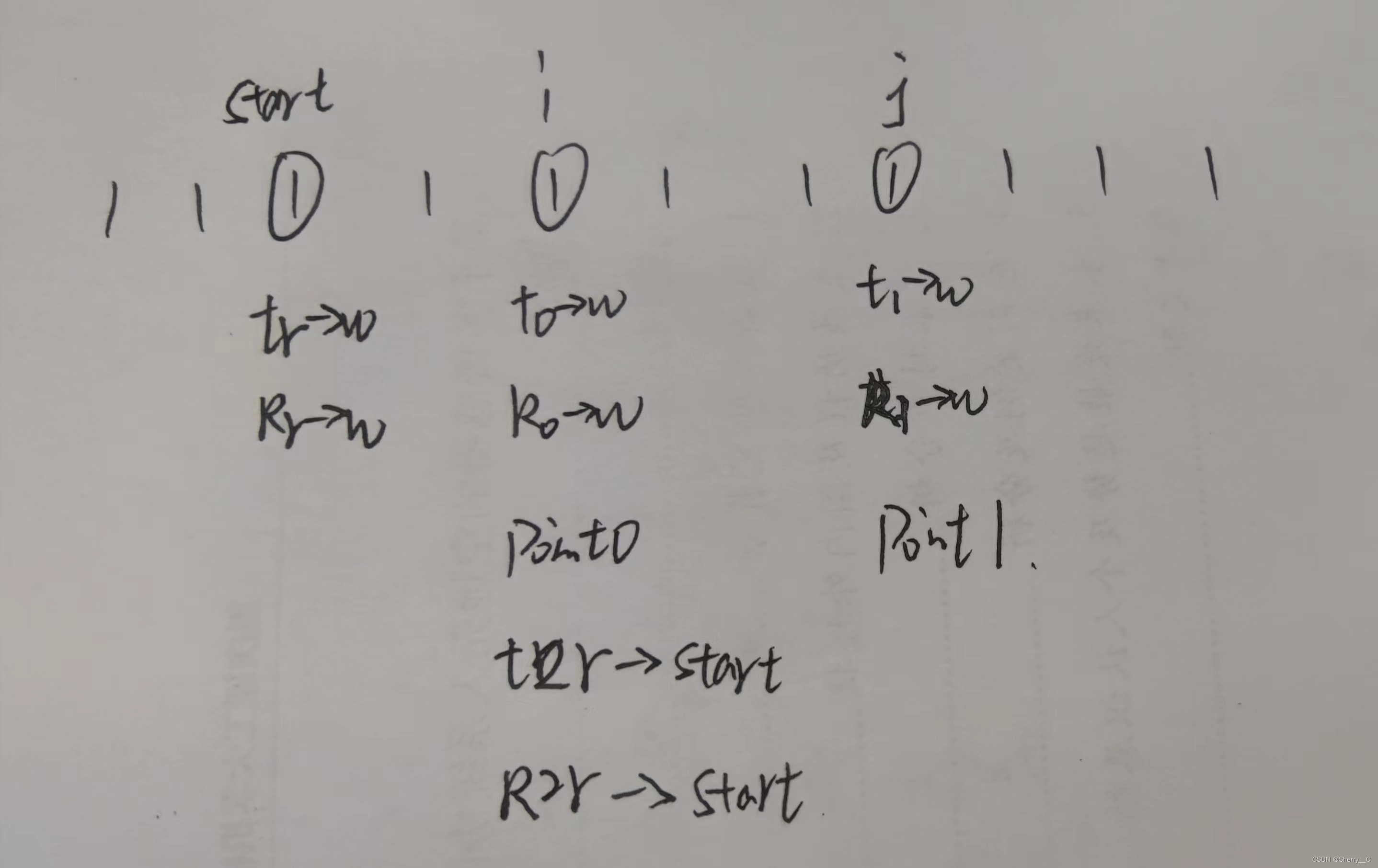

void process() ,开启一个VIO 线程,没有回环部分

把每张图像中 所有特征点 信息取出来放在map容器 image里去,然后进行 estimator.processImage(image, img_msg->header),把深度信息也放到里面去,增加1维

map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> image;

map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>> image;

double velocity_x = img_msg->channels[3].values[i];

double velocity_y = img_msg->channels[4].values[i];

double depth = img_msg->channels[5].values[i] / 1000.0;

emplace_back到image容器时也要改

Eigen::Matrix<double, 8, 1> xyz_uv_velocity_depth;

xyz_uv_velocity_depth << x, y, z, p_u, p_v, velocity_x, velocity_y, depth;

image[feature_id].emplace_back(camera_id, xyz_uv_velocity_depth);

这些信息都是在feature_tracker_node.cpp中获得

增加李代数相关的sophus库

#include <sophus/se3.h>

#include <sophus/so3.h>

using Sophus::SE3;

using Sophus::SO3;

用于判断是否为关键帧的processImage(),加入深度阈值判断

void processImage(const map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>> &image, const std_msgs::Header &header);

仿照visualInitialAlign(),visualInitialAlignWithDepth()

bool visualInitialAlignWithDepth();

initialStructure()

在用来后续做sfm的 SFMFeature tmp_feature中,SFMFeature结构体增加observation_depth(initial_sfm.h),来储存深度,把当前特征点的深度和当前帧的编号imu_j配对

tmp_feature.observation.push_back(make_pair(imu_j, Eigen::Vector2d{pts_j.x(), pts_j.y()}));

tmp_feature.observation.push_back(make_pair(imu_j, Eigen::Vector2d{pts_j.x(), pts_j.y()}));

tmp_feature.observation_depth.push_back(make_pair(imu_j, it_per_frame.depth));

初始化函数用改之后的visualInitialAlignWithDepth()

if (visualInitialAlign())

if (visualInitialAlignWithDepth())

bool Estimator::visualInitialAlign()也改了,

???为什么,不是已经改用visualInitialAlignWithDepth()来初始化了么

f_manager.triangulate(Ps, &(TIC_TMP[0]), &(RIC[0]));

f_manager.triangulateWithDepth(Ps, &(TIC_TMP[0]), &(RIC[0]));

visualInitialAlignWithDepth()对于visualInitialAlign()的改动:

改用新的triangulateWithDepth()来三角化特征点,得到深度

f_manager.triangulate(Ps, &(TIC_TMP[0]), &(RIC[0]));

f_manager.triangulateWithDepth(Ps, &(TIC_TMP[0]), &(RIC[0]));

删去了假设的尺度因子s,因此此时的深度已经获得

double s = (x.tail<1>())(0);

对应的,在把所有的平移对齐到滑窗中的第0帧时,删去s

???为什么要对齐

Ps[i] = s * Ps[i] - Rs[i] * TIC[0] - (s * Ps[0] - Rs[0] * TIC[0]);

Ps[i] = Ps[i] - Rs[i] * TIC[0] - (Ps[0] - Rs[0] * TIC[0]);

删去“把尺度模糊的3d点恢复到真实尺度下”这一步

for (auto &it_per_id : f_manager.feature)

{

it_per_id.used_num = it_per_id.feature_per_frame.size();

if (!(it_per_id.used_num >= 2 && it_per_id.start_frame < WINDOW_SIZE - 2))

continue;

it_per_id.estimated_depth *= s;

}

bool Estimator::relativePose()中,

把同时被frame_count_l frame_count_r帧看到的特征点在各自的像素坐标,返回给corres时,用新的getCorrespondingWithDepth()

corres = f_manager.getCorresponding(i, WINDOW_SIZE);

corres = f_manager.getCorrespondingWithDepth(i, WINDOW_SIZE);

用于计算视差的pts_0(u0,v0);pts_1(u1,v1);改为 pts_0(u0/z0,v0/z0);pts_1(u1/z1,v1/z1)

Vector2d pts_0(corres[j].first(0), corres[j].first(1));

Vector2d pts_1(corres[j].second(0), corres[j].second(1));

Vector2d pts_0(corres[j].first(0)/corres[j].first(2), corres[j].first(1)/corres[j].first(2));

Vector2d pts_1(corres[j].second(0)/corres[j].second(2), corres[j].second(1)/corres[j].second(2));

原本使用2D-2D的E矩阵(对极几何)恢复当前帧到枢纽帧的R、T,现在改用PNP。因为现在有depth(放在corres[?].second(2)),是3D-2D

if(average_parallax * 460 > 30 && m_estimator.solveRelativeRT(corres, relative_R, relative_T))

if(average_parallax * 460 > 30 && m_estimator.solveRelativeRT_PNP(corres, relative_R, relative_T))

solveOdometry()中,

把应该三角化但是没有三角化的特征点三角化时,加一步triangulateWithDepth(),对于深度传感器探测范围以外的点,采用原有的三角化方法triangulate()

f_manager.triangulate(Ps, tic, ric);

f_manager.triangulateWithDepth(Ps, tic, ric);

f_manager.triangulate(Ps, tic, ric);

其中的optimization()(借助ceres进行非线性优化)中,

在定义待优化的参数块,类似g2o的顶点时,不管带不带有传感器时间延时,加入约束的变量(该特征点的第一个观测帧以及其他一个观测帧,加上外参和特征点逆深度)的参数块之后,在有输入深度信息的情况下,设定特征点深度para_Feature在优化过程中不可变

if (ESTIMATE_TD)

{

ProjectionTdFactor *f_td = new ProjectionTdFactor(pts_i, pts_j, it_per_id.feature_per_frame[0].velocity, it_per_frame.velocity,

it_per_id.feature_per_frame[0].cur_td, it_per_frame.cur_td,

it_per_id.feature_per_frame[0].uv.y(), it_per_frame.uv.y());

problem.AddResidualBlock(f_td, loss_function, para_Pose[imu_i], para_Pose[imu_j], para_Ex_Pose[0], para_Feature[feature_index], para_Td[0]);

}

else

{

ProjectionFactor *f = new ProjectionFactor(pts_i, pts_j);

problem.AddResidualBlock(f, loss_function, para_Pose[imu_i], para_Pose[imu_j], para_Ex_Pose[0], para_Feature[feature_index]);

}

if (ESTIMATE_TD)

{

ProjectionTdFactor *f_td = new ProjectionTdFactor(pts_i, pts_j, it_per_id.feature_per_frame[0].velocity, it_per_frame.velocity,

it_per_id.feature_per_frame[0].cur_td, it_per_frame.cur_td,

it_per_id.feature_per_frame[0].uv.y(), it_per_frame.uv.y());

problem.AddResidualBlock(f_td, loss_function, para_Pose[imu_i], para_Pose[imu_j], para_Ex_Pose[0], para_Feature[feature_index], para_Td[0]);

if (it_per_id.estimate_flag == 1)

problem.SetParameterBlockConstant(para_Feature[feature_index]);

}

else

{

ProjectionFactor *f = new ProjectionFactor(pts_i, pts_j);

problem.AddResidualBlock(f, loss_function, para_Pose[imu_i], para_Pose[imu_j], para_Ex_Pose[0], para_Feature[feature_index]);

if (it_per_id.estimate_flag == 1)

problem.SetParameterBlockConstant(para_Feature[feature_index]);

}

FeaturePerFrame(每一个特征点的在一张图像中属性 point)中,加上1维存放depth

FeaturePerFrame(const Eigen::Matrix<double, 7, 1> &_point, double td)

FeaturePerFrame(const Eigen::Matrix<double, 8, 1> &_point, double td)

...

depth = _point(7);

...

double depth;

FeaturePerId,也加上测量depth,和是否外部输入depth标志

double measured_depth;

int estimate_flag;//0 initial; 1 by depth image; 2 by triangulate

FeaturePerId(int _feature_id, int _start_frame)

: feature_id(_feature_id), start_frame(_start_frame),

used_num(0), estimated_depth(-1.0), solve_flag(0)

{

}

FeaturePerId(int _feature_id, int _start_frame, double _measured_depth)

: feature_id(_feature_id), start_frame(_start_frame),

used_num(0), estimated_depth(-1.0), measured_depth(_measured_depth), solve_flag(0),estimate_flag(0)

{

}

FeatureManager中,

addFeatureCheckParallax()(特征点进入时检查视差,是否为关键帧)加入depth

getCorresponding()(获得前后两帧之间匹配特征点3D坐标)改用新的

triangulate()(特征点三角化求深度(SVD分解))改用新的

bool addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image, double td);

...

vector<pair<Vector3d, Vector3d>> getCorresponding(int frame_count_l, int frame_count_r);

...

void triangulate(Vector3d Ps[], Vector3d tic[], Matrix3d ric[]);

bool addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>> &image, double td);

...

vector<pair<Vector3d, Vector3d>> getCorrespondingWithDepth(int frame_count_l, int frame_count_r);

...

void triangulateWithDepth(Vector3d Ps[], Vector3d tic[], Matrix3d ric[]);

feature_manager.h定义了3个新函数,用来代替原本的

bool addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image, double td);

...

vector<pair<Vector3d, Vector3d>> getCorresponding(int frame_count_l, int frame_count_r);

...

void triangulate(Vector3d Ps[], Vector3d tic[], Matrix3d ric[]);

bool addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>> &image, double td);

...

vector<pair<Vector3d, Vector3d>> getCorrespondingWithDepth(int frame_count_l, int frame_count_r);

...

void triangulateWithDepth(Vector3d Ps[], Vector3d tic[], Matrix3d ric[]);

这里定义了上面三个新函数

bool FeatureManager::addFeatureCheckParallax(),(加特征点信息,同时检查上一帧是否时关键帧的函数 ),从FeaturePerId里提取新建特征点id和该特征点在滑窗中的当前位置(作为这个特征点的起始位置)时,加上1维提取、存放depth

bool FeatureManager::addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>> &image, double td)

...

feature.push_back(FeaturePerId(feature_id, frame_count));

bool FeatureManager::addFeatureCheckParallax(int frame_count, const map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>> &image, double td)

...

feature.push_back(FeaturePerId(feature_id, frame_count, id_pts.second[0].second(7)));

新的getCorresponding(),(得到同时被frame_count_l frame_count_r帧看到的特征点在各自的坐标),在获得在feature_per_frame中的索引(当前帧-第一次观测到特征点的帧数),最后配对放入corres,之间插入深度值判断(用来剔除异常特征点),然后将坐标乘回depth,得到像素坐标(u,v)形式

double depth_a = it.feature_per_frame[idx_l].depth;

if (depth_a < 0.1 || depth_a > 10)//max and min measurement

continue;

double depth_b = it.feature_per_frame[idx_r].depth;

if (depth_b < 0.1 || depth_b > 10)//max and min measurement

continue;

...

a = a * depth_a;

b = b * depth_b;

旧的triangulate(),末尾加上,将自己设定的estimate_flag=2(表示此时得到的深度值是三角化得来),以及深度值判断,不符合阈值则用深度测量模式。estimator.cpp里优先用新的,再用旧的(对于深度传感器探测范围以外的点,采用原有的三角化方法triangulate()),反正都是循环着后端求解位姿

it_per_id.estimate_flag = 2;

if (it_per_id.estimated_depth < 0.1)

{

it_per_id.estimated_depth = INIT_DEPTH;

it_per_id.estimate_flag = 0;

新的triangulateWithDepth(),大改。

原本的imu_i(特征点开始帧)改用start_frame表示,imu_j改用start_frame+j表示,位姿转换的代号变一下(?感觉像水创新点)。删去ROS的单目相机判断,删去SVD恢复深度值的相关代码行,引入verified_depths来储存右帧的depth,得到start_frame+i的相机坐标系位姿时加入深度值判断。

右帧的判断方式全改:从start帧开始,由i帧3D坐标乘i、j帧间的变换矩阵得到的j帧3D坐标---再转2D坐标,若与从特征点容器取得的j帧2D坐标,的残差合格,则i帧取为右帧。?应该是增加定位精度,剔除不合格的右帧,保证深度值正常

第一个观察到这个特征点的相机坐标系下的深度值=各个能看到该特征点的深度值平均

void FeatureManager::triangulateWithDepth(Vector3d Ps[], Vector3d tic[], Matrix3d ric[])

{

for (auto &it_per_id : feature)

{

it_per_id.used_num = it_per_id.feature_per_frame.size();

if (!(it_per_id.used_num >= 2 && it_per_id.start_frame < WINDOW_SIZE - 2))

continue;

if (it_per_id.estimated_depth > 0)

continue;

int start_frame = it_per_id.start_frame;//原本的imu-i

vector<double> verified_depths;//储存右帧的深度容器

Eigen::Vector3d tr = Ps[start_frame] + Rs[start_frame] * tic[0];

Eigen::Matrix3d Rr = Rs[start_frame] * ric[0]; //第一个观察到这个特征点的KF的位姿

for (int i=0; i < (int)it_per_id.feature_per_frame.size(); i++)

{

Eigen::Vector3d t0 = Ps[start_frame+i] + Rs[start_frame+i] * tic[0];

Eigen::Matrix3d R0 = Rs[start_frame+i] * ric[0];// 得到该KF(左帧)的相机坐标系位姿

double depth_threshold = 3; //for handheld and wheeled application. Since d435i <3 is quiet acc

//double depth_threshold = 10; //for tracked application, since IMU quite noisy in this scene

if (it_per_id.feature_per_frame[i].depth < 0.1 || it_per_id.feature_per_frame[i].depth >depth_threshold)

continue;

Eigen::Vector3d point0(it_per_id.feature_per_frame[i].point * it_per_id.feature_per_frame[i].depth);//start_frame+i帧的该特征点的3D坐标,(feature_per_frame[0]=start_frame)

// transform to reference frame

// T_w_cj -> T_c0_cj

Eigen::Vector3d t2r = Rr.transpose() * (t0 - tr);

Eigen::Matrix3d R2r = Rr.transpose() * R0; // start_frame+i帧相对于世界的 转化 为start_frame+i帧与start_frame帧的相对位姿变换

for (int j=0; j<(int)it_per_id.feature_per_frame.size(); j++)

{

if (i==j)

continue;

Eigen::Vector3d t1 = Ps[start_frame+j] + Rs[start_frame+j] * tic[0];

Eigen::Matrix3d R1 = Rs[start_frame+j] * ric[0];// 得到start_frame+j帧的相机坐标系位姿

Eigen::Vector3d t20 = R0.transpose() * (t1 - t0);

Eigen::Matrix3d R20 = R0.transpose() * R1; // start_frame+j帧相对于世界的 转化 为start_frame+j帧与start_frame+i帧的相对位姿变换

Eigen::Vector3d point1_projected = R20.transpose() * point0 - R20.transpose() * t20;//start_frame+j帧的该特征点的3D坐标,由start_frame+i的3D坐标推得

Eigen::Vector2d point1_2d(it_per_id.feature_per_frame[j].point.x(), it_per_id.feature_per_frame[j].point.y());//start_frame+j帧的该特征点的2D坐标(u,v),从特征点容器直接取

Eigen::Vector2d residual = point1_2d - Vector2d(point1_projected.x() / point1_projected.z(), point1_projected.y() / point1_projected.z());//该特征点的残差,特征点容器取得的坐标-ij标转换得到的坐标

if (residual.norm() < 10.0 / 460) {//this can also be adjust to improve performance

//残差合格才代表start_frame+i、start_frame+j间的变换矩阵没问题,右帧的特征点3D坐标由start_frame+i推得,储存其3D坐标,也变相剔除了j《i的情况

Eigen::Vector3d point_r = R2r * point0 + t2r;

verified_depths.push_back(point_r.z());//储存右帧的深度值

}

}

}

if (verified_depths.size() == 0)

continue;

double depth_sum = std::accumulate(std::begin(verified_depths),std::end(verified_depths),0.0);

double depth_ave = depth_sum / verified_depths.size();

it_per_id.estimated_depth = depth_ave;//第一个观察到这个特征点的相机坐标系下的深度值=各个能看到该特征点的深度值平均

it_per_id.estimate_flag = 1;//设深度值获得方式为深度图

if (it_per_id.estimated_depth < 0.1)

{

it_per_id.estimated_depth = INIT_DEPTH;// 具体太近就设置成默认值

it_per_id.estimate_flag = 0;

}

}

}

void readParameters(ros::NodeHandle &n)中,

增加了若无轨迹输出文件夹,则新建

(这里应该是)

std::string OUTPUT_PATH;

fsSettings["output_path"] >> OUTPUT_PATH;

VINS_RESULT_PATH = OUTPUT_PATH + "/vins_result_no_loop.csv";

std::cout << "result path " << VINS_RESULT_PATH << std::endl;

// create folder if not exists

FileSystemHelper::createDirectoryIfNotExists(OUTPUT_PATH.c_str());

但是,在utility.h里没有定义class FileSystemHelper,而是后续的mono加上了

#include <sys/types.h>

#include <sys/stat.h>

#include <libgen.h>

#include <stdio.h>

#include <stdlib.h>

...

class FileSystemHelper

{

public:

/******************************************************************************

* Recursively create directory if `path` not exists.

* Return 0 if success.

*****************************************************************************/

static int createDirectoryIfNotExists(const char *path)

{

struct stat info;

int statRC = stat(path, &info);

if( statRC != 0 )

{

if (errno == ENOENT)

{

printf("%s not exists, trying to create it \n", path);

if (! createDirectoryIfNotExists(dirname(strdupa(path))))

{

if (mkdir(path, S_IRWXU | S_IRWXG | S_IROTH | S_IXOTH) != 0)

{

fprintf(stderr, "Failed to create folder %s \n", path);

return 1;

}

else

return 0;

}

else

return 1;

} // directory not exists

if (errno == ENOTDIR)

{

fprintf(stderr, "%s is not a directory path \n", path);

return 1;

} // something in path prefix is not a dir

return 1;

}

return ( info.st_mode & S_IFDIR ) ? 0 : 1;

}

};

添加64浮点数

#include <std_msgs/Float64.h>

Keyframe、PointCloud的pub函数加入depth。pubOdometry()加入pub_delta_p、pub_delta_v、pub_delta_t,来表示???

待补

class ImageFrame中,实例化对象和map都加上1维存放depth

ImageFrame(const map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>>>>& _points, double _t):t{_t},is_key_frame{false}

...

map<int, vector<pair<int, Eigen::Matrix<double, 7, 1>> > > points;

ImageFrame(const map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>>>>& _points, double _t):t{_t},is_key_frame{false}

...

map<int, vector<pair<int, Eigen::Matrix<double, 8, 1>> > > points;

struct SFMFeature中,加入

vector<pair<int,double>> observation_depth;

class GlobalSFM中,加入

void triangulateTwoFramesWithDepth(int frame0, Eigen::Matrix<double, 3, 4> &Pose0,

int frame1, Eigen::Matrix<double, 3, 4> &Pose1,

vector<SFMFeature> &sfm_f);

class MotionEstimator中,加入...用来计算3D-2D相机运动得到RT(ICP好像没用上?)

bool solveRelativeRT_ICP (vector<pair<Vector3d, Vector3d>> &corres, Matrix3d &R, Vector3d &T);

bool solveRelativeRT_PNP(const vector<pair<Vector3d, Vector3d>> &corres, Matrix3d &Rotation, Vector3d &Translation);

添加新的RefineGravity(),

该函数的目的是,迭代改善重力g的方向。

n_state表示Ax=b中,A的维度。all_image_frame 保存了每张图像关键帧的位姿,预积分量和关于角点的信息,3维。重力向量参数化,自由度为2。最后1维原本是深度值。

tmp_A(6,9) = [-I*dt 0 (R^bk_c0)*dt*dt*b/2 (R^bk_c0)*((p^c0_ck+1)-(p^c0_ck)) ]

[ -I (R^bk_c0)*(R^c0_bk+1) (R^bk_c0)*dt*b 0 ]

新的tmp_A,删去了(R^bk_c0)*((p^c0_ck+1)-(p^c0_ck))。(???因为这一列对应的状态量是尺度s,而我们的深度值已知,不需要优化s了)

tmp_b(6,1) = [ (a^bk_bk+1)+(R^bk_c0)*(R^c0_bk+1)*p^b_c-p^b_c - (R^bk_c0)*dt*dt*||g||*(g^-)/2 , (b^bk_bk+1)-(R^bk_c0)dt*||g||*(g^-)]^T

新的tmp_b,原本的 (a^bk_bk+1)+(R^bk_c0)*(R^c0_bk+1)*p^b_c-p^b_c - (R^bk_c0)*dt*dt*||g||*(g^-)/2之后,减去(R^bk_c0)*((p^c0_ck+1)-(p^c0_ck))。(???

A删去的同时,b也要减)

???下面是EKF常规做法

int n_state = all_frame_count * 3 + 2 + 1;

...

MatrixXd tmp_A(6, 9);

...

tmp_A.block<3, 1>(0, 8) = frame_i->second.R.transpose() * (frame_j->second.T - frame_i->second.T) / 100.0;

tmp_b.block<3, 1>(0, 0) = frame_j->second.pre_integration->delta_p + frame_i->second.R.transpose() * frame_j->second.R * TIC[0] - TIC[0] - frame_i->second.R.transpose() * dt * dt / 2 * g0;

...

A.bottomRightCorner<3, 3>() += r_A.bottomRightCorner<3, 3>();

b.tail<3>() += r_b.tail<3>();

A.block<6, 3>(i * 3, n_state - 3) += r_A.topRightCorner<6, 3>();

A.block<3, 6>(n_state - 3, i * 3) += r_A.bottomLeftCorner<3, 6>();

...

VectorXd dg = x.segment<2>(n_state - 3);

int n_state = all_frame_count * 3 + 2;

...

MatrixXd tmp_A(6, 8);

...

tmp_b.block<3, 1>(0, 0) = frame_j->second.pre_integration->delta_p + frame_i->second.R.transpose() * frame_j->second.R * TIC[0] - TIC[0] - frame_i->second.R.transpose() * dt * dt / 2 * g0 - frame_i->second.R.transpose() * (frame_j->second.T - frame_i->second.T);

...

A.bottomRightCorner<2, 2>() += r_A.bottomRightCorner<2, 2>();

b.tail<2>() += r_b.tail<2>();

A.block<6, 2>(i * 3, n_state - 2) += r_A.topRightCorner<6, 2>();

A.block<2, 6>(n_state - 2, i * 3) += r_A.bottomLeftCorner<2, 6>();

...

VectorXd dg = x.segment<2>(n_state - 2);

添加新的LinearAlignment(),

该函数的目的是,求解各帧的速度,枢纽帧的重力方向,以及尺度

改动和RefineGravity()类似,去掉尺度相关的维度、J矩阵

int n_state = all_frame_count * 3 + 3 + 1;

...

MatrixXd tmp_A(6, 10);

...

tmp_A.block<3, 1>(0, 9) = frame_i->second.R.transpose() * (frame_j->second.T - frame_i->second.T) / 100.0;

tmp_b.block<3, 1>(0, 0) = frame_j->second.pre_integration->delta_p + frame_i->second.R.transpose() * frame_j->second.R * TIC[0] - TIC[0];

...

A.bottomRightCorner<4, 4>() += r_A.bottomRightCorner<4, 4>();

b.tail<4>() += r_b.tail<4>();

A.block<6, 4>(i * 3, n_state - 4) += r_A.topRightCorner<6, 4>();

A.block<4, 6>(n_state - 4, i * 3) += r_A.bottomLeftCorner<4, 6>();

...

double s = x(n_state - 1) / 100.0;

ROS_DEBUG("estimated scale: %f", s);

g = x.segment<3>(n_state - 4);

...

if(fabs(g.norm() - G.norm()) > 1.0 || s < 0)

...

RefineGravity(all_image_frame, g, x);

s = (x.tail<1>())(0) / 100.0;

(x.tail<1>())(0) = s;

ROS_DEBUG_STREAM(" refine " << g.norm() << " " << g.transpose());

if(s < 0.0 )

return false;

else

return true;

int n_state = all_frame_count * 3 + 3;//no scale now

...

MatrixXd tmp_A(6, 9);//no scale now

...

tmp_b.block<3, 1>(0, 0) = frame_j->second.pre_integration->delta_p + frame_i->second.R.transpose() * frame_j->second.R * TIC[0] - TIC[0] - frame_i->second.R.transpose() * (frame_j->second.T - frame_i->second.T);

...

A.bottomRightCorner<3, 3>() += r_A.bottomRightCorner<3, 3>();

b.tail<3>() += r_b.tail<3>();

A.block<6, 3>(i * 3, n_state - 3) += r_A.topRightCorner<6, 3>();

A.block<3, 6>(n_state - 3, i * 3) += r_A.bottomLeftCorner<3, 6>();

...

g = x.segment<3>(n_state - 3);

...

if(fabs(g.norm() - G.norm()) > 1.0)

...

RefineGravityWithDepth(all_image_frame, g, x);

ROS_DEBUG_STREAM(" refine " << g.norm() << " " << g.transpose());

VisualIMUAlignment()改用新的LinearAlignment()

if(LinearAlignment(all_image_frame, g, x))

if(LinearAlignmentWithDepth(all_image_frame, g, x))

新的triangulateTwoFrames(),

该函数的目的是,根据两帧索引和位姿计算对应特征点的三角化位置。

取出Pose0、Pose1的旋转、平移,后续用来三角化出point0的point_3d,和由point_3d推的point1_reprojected

取出在该帧的观测:point0改为三维,加入depth,定义的时候u,v乘回depth。因为储存在observation_depth里的时候就是(u/depth,v/depth,depth)。

加个深度阈值判断。

如果都能被两帧看到,三角化该特征点:此时不用triangulatePoint()了,改成用point0的2D坐标投回3D坐标,再由两帧的相对R,T推到point1的3D坐标。因此定义残差residual,(从observation取的point1-point_3d推的point1_reprojected),来保证point_3d的正确性。此后9得到左帧的point_3d

(???为了增加三角化的可靠性,剔除太离谱的特征点)

assert(frame0 != frame1);

...

Vector2d point0;

...

for (int k = 0; k < (int)sfm_f[j].observation.size(); k++)

{

...

point0 = sfm_f[j].observation[k].second;

...

Vector3d point_3d;

triangulatePoint(Pose0, Pose1, point0, point1, point_3d);

assert(frame0 != frame1);

Matrix3d Pose0_R = Pose0.block< 3,3 >(0,0);

Matrix3d Pose1_R = Pose1.block< 3,3 >(0,0);

Vector3d Pose0_t = Pose0.block< 3,1 >(0,3);

Vector3d Pose1_t = Pose1.block< 3,1 >(0,3);

...

Vector3d point0;

...

for (int k = 0; k < (int)sfm_f[j].observation.size(); k++)

{

if (sfm_f[j].observation_depth[k].second < 0.1 || sfm_f[j].observation_depth[k].second >10) //max and min measurement

continue;

...

point0 = Vector3d(sfm_f[j].observation[k].second.x()*sfm_f[j].observation_depth[k].second,sfm_f[j].observation[k].second.y()*sfm_f[j].observation_depth[k].second,sfm_f[j].observation_depth[k].second);

...

Vector2d residual;

Vector3d point_3d, point1_reprojected;

point_3d = Pose0_R.transpose()*point0 - Pose0_R.transpose()*Pose0_t;//shan add:this is point in world;

point1_reprojected = Pose1_R*point_3d+Pose1_t;

residual = point1 - Vector2d(point1_reprojected.x()/point1_reprojected.z(),point1_reprojected.y()/point1_reprojected.z());

if (residual.norm() < 1.0/460){

sfm_f[j].state = true;

sfm_f[j].position[0] = point_3d(0);

sfm_f[j].position[1] = point_3d(1);

sfm_f[j].position[2] = point_3d(2);

}

construct()中,

该函数的目的是,对窗口中每个图像帧求解sfm问题,得到所有图像帧相对于参考帧C0的旋转四元数Q、平移向量T和特征点3d坐标

前面的三角化改用新的triangulateTwoFramesWithDepth(),即参考帧+关键帧、参考帧之后每一帧+关键帧、参考帧+参考帧之后每一帧(不包括当前帧)、参考帧之前的每一帧+参考帧

处理到三角化其他没有被三角化的特征点时,套用新的triangulateTwoFramesWithDepth()部分

triangulateTwoFrames(i, Pose[i], frame_num - 1, Pose[frame_num - 1], sfm_f);

...

triangulateTwoFrames(l, Pose[l], i, Pose[i], sfm_f);

...

triangulateTwoFrames(i, Pose[i], l, Pose[l], sfm_f);

...

Vector2d point0, point1;

int frame_0 = sfm_f[j].observation[0].first;

point0 = sfm_f[j].observation[0].second;

int frame_1 = sfm_f[j].observation.back().first;

point1 = sfm_f[j].observation.back().second;

Vector3d point_3d;

triangulatePoint(Pose[frame_0], Pose[frame_1], point0, point1, point_3d);

triangulateTwoFramesWithDepth(i, Pose[i], frame_num - 1, Pose[frame_num - 1], sfm_f);

...

triangulateTwoFramesWithDepth(l, Pose[l], i, Pose[i], sfm_f);

...

triangulateTwoFramesWithDepth(i, Pose[i], l, Pose[l], sfm_f);

...

Vector3d point0;

Vector2d point1;

int frame_0 = sfm_f[j].observation[0].first;

if (sfm_f[j].observation_depth[0].second < 0.1 || sfm_f[j].observation_depth[0].second > 10) //max and min measurement

continue;

point0 = Vector3d(sfm_f[j].observation[0].second.x()*sfm_f[j].observation_depth[0].second,sfm_f[j].observation[0].second.y()*sfm_f[j].observation_depth[0].second,sfm_f[j].observation_depth[0].second);

int frame_1 = sfm_f[j].observation.back().first;

point1 = sfm_f[j].observation.back().second;

Vector3d point_3d;

Matrix3d Pose0_R = Pose[frame_0].block< 3,3 >(0,0);

Matrix3d Pose1_R = Pose[frame_1].block< 3,3 >(0,0);

Vector3d Pose0_t = Pose[frame_0].block< 3,1 >(0,3);

Vector3d Pose1_t = Pose[frame_1].block< 3,1 >(0,3);

Vector2d residual;

Vector3d point1_reprojected;

point_3d = Pose0_R.transpose()*point0 - Pose0_R.transpose()*Pose0_t;//point in world;

point1_reprojected = Pose1_R*point_3d+Pose1_t;

residual = point1 - Vector2d(point1_reprojected.x()/point1_reprojected.z(),point1_reprojected.y()/point1_reprojected.z());

if (residual.norm() < 1.0/460) {//reprojection error

增加李代数相关库sophus的头文件

#include <sophus/se3.h>

#include <sophus/so3.h>

using Sophus::SE3;

using Sophus::SO3;

solveRelativeRT()中,

该函数的目的是,根据两帧匹配对求解R和带尺度的t

加一个打印ros日志

if(inlier_cnt > 12)

return true;

else

return false;

if(inlier_cnt > 12) {

ROS_ERROR_STREAM("----------5points-----------");

ROS_ERROR_STREAM(ll.size());

ROS_ERROR_STREAM(inlier_cnt);

ROS_ERROR_STREAM(Rotation);

ROS_ERROR_STREAM(Translation);

return true;

}

else

return false;

定义新的solveRelativeRT(),PnP,3D-2D

原本的左帧中的特征点ll,改为3D的lll,存放3d坐标

后续不用E矩阵恢复,改用cv库的pnp,参考十四讲7.8实践代码和【OpenCV】solvePnPRansac /solvePnP 计算外参数,求解相机位姿_guoqiang_sunshine的博客-CSDN博客_opencv solvepnp 参数

bool MotionEstimator::solveRelativeRT_PNP(const vector<pair<Vector3d, Vector3d>> &corres, Matrix3d &Rotation, Vector3d &Translation)

{

vector<cv::Point3f> lll;

vector<cv::Point2f> rr;

for (int i = 0; i< int(corres.size()); i++)

{

if (corres[i].first(2) >0 && corres[i].second(2) >0 ) {

lll.push_back(cv::Point3f(corres[i].first(0), corres[i].first(1), corres[i].first(2)));

rr.push_back(cv::Point2f(corres[i].second(0) / corres[i].second(2),

corres[i].second(1) / corres[i].second(2)));

}

}

cv::Mat rvec,tvec,inliersArr;

cv::Mat cameraMatrix = (cv::Mat_<double>(3, 3) << 1, 0, 0, 0, 1, 0, 0, 0, 1);

cv::solvePnPRansac(lll, rr, cameraMatrix, cv::Mat(), rvec, tvec, false, 100, 1.0/460, 0.99,

inliersArr, cv::SOLVEPNP_ITERATIVE);//maybe don't need 100times

Vector3d tran(tvec.at<double>(0, 0), tvec.at<double>(1, 0), tvec.at<double>(2, 0));

Matrix3d rota = SO3(rvec.at<double>(0, 0), rvec.at<double>(1, 0), rvec.at<double>(2, 0)).matrix();

ROS_DEBUG_STREAM("-----------pnp-----------");

ROS_DEBUG_STREAM(lll.size());

ROS_DEBUG_STREAM(inliersArr.rows);

Rotation = rota.transpose();

Translation = -rota.transpose() * tran;

ROS_DEBUG_STREAM(Rotation);

ROS_DEBUG_STREAM(Translation);

return true;

}

- utility文件夹里的h、cpp文件和feature_tracker一样的改动

三、pose_graph

class KeyFrame里,

加上了EIGEN_MAKE_ALIGNED_OPERATOR_NEW,用来提供对齐重载new宏,否则会因为内存未对齐就计算而报错。详情Eigen 内存对齐 - 知乎

KeyFrame实例里加上了vector<cv::Point3f> &_point_3d_depth,储存特征点的深度值

public:

KeyFrame(double _time_stamp, int _index, Vector3d &_vio_T_w_i, Matrix3d &_vio_R_w_i, cv::Mat &_image,

...

vector<cv::Point2f> point_2d_norm;

public:

EIGEN_MAKE_ALIGNED_OPERATOR_NEW

KeyFrame(double _time_stamp, int _index, Vector3d &_vio_T_w_i, Matrix3d &_vio_R_w_i, cv::Mat &_image, vector<cv::Point3f> &_point_3d_depth,

...

vector<cv::Point2f> point_2d_norm;

vector<cv::Point3f> point_3d_depth;

???在pose_graph_node.cpp和pose_graph.cpp里用到

extern Eigen::Matrix<double, 3, 1> ti_d;

extern Eigen::Matrix<double, 3, 3> qi_d;

增加了点云pcl相关头文件;

增加了滤波算法的接口:void pclFilter(); 点云相关指针Ptr cloud、Ptr save_cloud

增加了八叉树相关指针* octree、成员变量m_octree、ros发布pub_octree

#define SHOW_L_EDGE true

...

CameraPoseVisualization* posegraph_visualization;

...

...

std::mutex m_drift;

...

ros::Publisher pub_path[10];

...

#include <sensor_msgs/PointCloud2.h>

#include <sensor_msgs/point_cloud_conversion.h>

#include <pcl_conversions/pcl_conversions.h>

#include <pcl/point_cloud.h>

#include <pcl/octree/octree.h>

#include <pcl/octree/octree_impl.h>

#include <pcl/io/pcd_io.h>

#define SHOW_L_EDGE false

...

CameraPoseVisualization* posegraph_visualization;

void pclFilter(bool flag);

...

pcl::octree::OctreePointCloudDensity<pcl::PointXYZ>* octree;

pcl::PointCloud<pcl::PointXYZ>::Ptr cloud;

pcl::PointCloud<pcl::PointXYZ>::Ptr save_cloud;

...

std::mutex m_drift;

std::mutex m_octree;

...

ros::Publisher pub_path[10];

ros::Publisher pub_octree;

实例化KeyFrame的时候,加上_point_3d_depth;

computeBRIEFPoint()中,

该函数的目的是,给回环额外提取500个fast特征点并计算描述子

在出错的情况下用goodFeaturesToTrack()计算500个shi-tomasi角点,改为先用Fast()计算Fast角点,给出警报,再用goodFeaturesToTrack()计算50个shi-tomasi角点,在0.1的 品质因子下;(???为啥)

KeyFrame::KeyFrame(double _time_stamp, int _index, Vector3d &_vio_T_w_i, Matrix3d &_vio_R_w_i, cv::Mat &_image,

vector<cv::Point3f> &_point_3d, vector<cv::Point2f> &_point_2d_uv, vector<cv::Point2f> &_point_2d_norm,

vector<double> &_point_id, int _sequence)

...

point_2d_norm = _point_2d_norm;

...

else

{

vector<cv::Point2f> tmp_pts;

cv::goodFeaturesToTrack(image, tmp_pts, 500, 0.01, 10);

for(int i = 0; i < (int)tmp_pts.size(); i++)

KeyFrame::KeyFrame(double _time_stamp, int _index, Vector3d &_vio_T_w_i, Matrix3d &_vio_R_w_i, cv::Mat &_image,

vector<cv::Point3f> &_point_3d_depth, vector<cv::Point3f> &_point_3d, vector<cv::Point2f> &_point_2d_uv,

vector<cv::Point2f> &_point_2d_norm, vector<double> &_point_id, int _sequence)

...

point_2d_norm = _point_2d_norm;

point_3d_depth = _point_3d_depth;

...

else

{

cv::FAST(image, keypoints, fast_th, true);

ROS_ERROR("num of FAST: %d",(int)keypoints.size());

keypoints.clear();

vector<cv::Point2f> tmp_pts;

cv::goodFeaturesToTrack(image, tmp_pts, 50, 0.1, 10);

ROS_ERROR("num of good features: %d",(int)tmp_pts.size());

for(int i = 0; i < (int)tmp_pts.size(); i++)

增加滤波器相关头文件;

增加深度信息ROS暂存消息器depth_buf;

增加pcl相关变量。(ti_d、qi_d装相机外参(如果已知))

...

queue<sensor_msgs::ImageConstPtr> image_buf;

...

#include <message_filters/subscriber.h>

#include <message_filters/time_synchronizer.h>

#include <message_filters/synchronizer.h>

#include <message_filters/sync_policies/approximate_time.h>

...

queue<sensor_msgs::ImageConstPtr> image_buf,depth_buf;

...

float PCL_MAX_DIST, PCL_MIN_DIST, RESOLUTION;

int U_BOUNDARY, D_BOUNDARY, L_BOUNDARY, R_BOUNDARY;

int PCL_DIST;

Eigen::Matrix<double, 3, 1> ti_d;

Eigen::Matrix<double, 3, 3> qi_d;

主文件int main(int argc, char **argv)中,

加入depth的topic;

加入pcl相关的文件、实例化稠密八叉树Octree、点云cloud、保存点云save_cloud,并分别标注指向地址;

将cv类型矩阵(相机外参)cv_qid,、cv_tid用cv2eigen()转成Eigen形式的qi_d、ti_d;(???是因为用了pnp来三角化么)

原本的ROS订阅图像sub_image,改为rgbd的写法;(和feature_tracker_node.cpp的主函数改法一样,参照 ros消息时间同步与回调_kint_zhao的博客-CSDN博客

)

std::string IMAGE_TOPIC;

...

if (LOOP_CLOSURE)

{

ROW = fsSettings["image_height"];

COL = fsSettings["image_width"];

...

fsSettings["image_topic"] >> IMAGE_TOPIC;

...

FileSystemHelper::createDirectoryIfNotExists(POSE_GRAPH_SAVE_PATH.c_str());

FileSystemHelper::createDirectoryIfNotExists(VINS_RESULT_PATH.c_str());

...

ros::Subscriber sub_image = n.subscribe(IMAGE_TOPIC, 2000, image_callback);

std::string IMAGE_TOPIC,DEPTH_TOPIC;

...

if (LOOP_CLOSURE)

{

ROW = fsSettings["image_height"];

COL = fsSettings["image_width"];

PCL_DIST = fsSettings["pcl_dist"];

U_BOUNDARY = fsSettings["u_boundary"];

D_BOUNDARY = fsSettings["d_boundary"];

L_BOUNDARY = fsSettings["l_boundary"];

R_BOUNDARY = fsSettings["r_boundary"];

PCL_MIN_DIST = fsSettings["pcl_min_dist"];

PCL_MAX_DIST = fsSettings["pcl_max_dist"];

RESOLUTION = fsSettings["resolution"];

posegraph.octree = new pcl::octree::OctreePointCloudDensity<pcl::PointXYZ>(RESOLUTION);

posegraph.cloud = pcl::PointCloud<pcl::PointXYZ>::Ptr(new pcl::PointCloud<pcl::PointXYZ>());

posegraph.save_cloud = pcl::PointCloud<pcl::PointXYZ>::Ptr(new pcl::PointCloud<pcl::PointXYZ>());

posegraph.octree->setInputCloud(posegraph.cloud);

posegraph.octree->addPointsFromInputCloud();

posegraph.octree->defineBoundingBox(-100, -100, -100, 100, 100, 100);

...

fsSettings["image_topic"] >> IMAGE_TOPIC;

fsSettings["depth_topic"] >> DEPTH_TOPIC;

...

cv::Mat cv_qid, cv_tid;

fsSettings["extrinsicRotation"] >> cv_qid;

fsSettings["extrinsicTranslation"] >> cv_tid;

cv::cv2eigen(cv_qid, qi_d);

cv::cv2eigen(cv_tid, ti_d);

...

message_filters::Subscriber<sensor_msgs::Image> sub_image(n, IMAGE_TOPIC, 1);

message_filters::Subscriber<sensor_msgs::Image> sub_depth(n, DEPTH_TOPIC, 1);

typedef message_filters::sync_policies::ApproximateTime<sensor_msgs::Image,sensor_msgs::Image> syncPolicy;

message_filters::Synchronizer<syncPolicy> sync(syncPolicy(10), sub_image, sub_depth);

sync.registerCallback(boost::bind(&image_callback, _1, _2));

new_sequence()中,

该函数的目的是,开始一个新的图像序列(地图合并功能);

加入depth消息容器的清理。

while(!depth_buf.empty())

depth_buf.pop();

image_callback()中,

加入深度相关消息。

void image_callback(const sensor_msgs::ImageConstPtr &image_msg)

...

m_buf.lock();

image_buf.push(image_msg);

m_buf.unlock();

void image_callback(const sensor_msgs::ImageConstPtr &image_msg, const sensor_msgs::ImageConstPtr &depth_msg)

...

m_buf.lock();

image_buf.push(image_msg);

depth_buf.push(depth_msg);

m_buf.unlock();

回环主线程 process()中,

加入深度消息指针depth_msg,depth_buf的一系列处理跟着image_buf走;

sensor_msgs::ImageConstPtr image_msg = NULL;

...

while (image_buf.front()->header.stamp.toSec() < pose_msg->header.stamp.toSec())

image_buf.pop();

image_msg = image_buf.front();

image_buf.pop();

sensor_msgs::ImageConstPtr image_msg = NULL;

sensor_msgs::ImageConstPtr depth_msg = NULL;

...

while (image_buf.front()->header.stamp.toSec() < pose_msg->header.stamp.toSec())

{

image_buf.pop();

depth_buf.pop();

}

image_msg = image_buf.front();

depth_msg = depth_buf.front();

image_buf.pop();

depth_buf.pop();

增加以下,把depth的ros message也转成cv::Mat,放在image里,创造depth_ptr指针;(这里和feature_tracker_node.cpp的image_callback()一样的改法)

ptr = cv_bridge::toCvCopy(image_msg, sensor_msgs::image_encodings::MONO8);

...

cv::Mat image = ptr->image;

ptr = cv_bridge::toCvCopy(image_msg, sensor_msgs::image_encodings::MONO8);

cv_bridge::CvImageConstPtr depth_ptr;

{

sensor_msgs::Image img;

img.header = depth_msg->header;

img.height = depth_msg->height;

img.width = depth_msg->width;

img.is_bigendian = depth_msg->is_bigendian;

img.step = depth_msg->step;

img.data = depth_msg->data;

img.encoding = sensor_msgs::image_encodings::MONO16;

depth_ptr = cv_bridge::toCvCopy(img, sensor_msgs::image_encodings::MONO16);

}

...

cv::Mat image = ptr->image;

cv::Mat depth = depth_ptr->image;

增加定义特征点3d坐标的深度point_3d_depth容器;

在创建回环检测节点的KF前,将判断正确的深度值返回point_3d_depth容器;

判断方法:遍历所有点云,将像素坐标a转化为无畸变的归一化b(3d)。如果depth_val在阈值内,则将depth_val和b一起返回到point_3d_depth容器

实例化加入了深度值的KeyFrame为KeyFrame* keyframe。

vector<cv::Point2f> point_2d_normal;

...

KeyFrame* keyframe = new KeyFrame(pose_msg->header.stamp.toSec(), frame_index, T, R, image,

point_3d, point_2d_uv, point_2d_normal, point_id, sequence);

vector<cv::Point2f> point_2d_normal;

vector<cv::Point3f> point_3d_depth;

...

for (int i = L_BOUNDARY; i < COL - R_BOUNDARY; i += PCL_DIST)

{

for (int j = U_BOUNDARY; j < ROW - D_BOUNDARY; j += PCL_DIST)

{

Eigen::Vector2d a(i, j);

Eigen::Vector3d b;

m_camera->liftProjective(a, b);

float depth_val = ((float)depth.at<unsigned short>(j, i)) / 1000.0;

if (depth_val > PCL_MIN_DIST && depth_val < PCL_MAX_DIST)

{

point_3d_depth.push_back(cv::Point3f(b.x() * depth_val, b.y() * depth_val, depth_val));

}

}

}

KeyFrame* keyframe = new KeyFrame(pose_msg->header.stamp.toSec(), frame_index, T, R, image, point_3d_depth,

point_3d, point_2d_uv, point_2d_normal, point_id, sequence);

void command()中,

加入键盘控制"p"非pcl滤波模式、"d"保存点云为pcd格式

if (c == 'n')

new_sequence();

if (c == 'n')

new_sequence();

if (c == 'p')

{

TicToc t_filter;

posegraph.pclFilter(false);

printf("pclFilter time: %f", t_filter.toc());

}

if (c == 'd')

{

TicToc t_pcdfile;

posegraph.save_cloud->width = posegraph.save_cloud->points.size();

posegraph.save_cloud->height = 1;

pcl::io::savePCDFileASCII("/home/riger/pcd_file_"+to_string(frame_index)+"keyframes.pcd", *(posegraph.save_cloud));

printf("Save pcd file done! Time cost: %f", t_pcdfile.toc());

}

参考:PCL filter 点云滤波汇总_yamgyutou的博客-CSDN博客

加入声明外部变量RESOLUTION,分辨率参数,用于描述最低一级八叉树的最小体素的尺寸

extern float RESOLUTION;

registerPub()中

该函数的目的是,注册一些发布publisher。

加入八叉树的发布pub_octree。

pub_pose_graph = n.advertise<visualization_msgs::MarkerArray>("pose_graph", 1000);

pub_pose_graph = n.advertise<visualization_msgs::MarkerArray>("pose_graph", 1000);

pub_octree = n.advertise<sensor_msgs::PointCloud2>("octree", 1000);

addKeyFrame()中,

该函数的目的是,添加关键帧,完成回环检测与闭环的过程。

在可视化部分中,在发布path[sequence_cnt]之后添加点云可视化???

path[sequence_cnt].poses.push_back(pose_stamped);

path[sequence_cnt].header = pose_stamped.header;

path[sequence_cnt].poses.push_back(pose_stamped);

path[sequence_cnt].header = pose_stamped.header;

sensor_msgs::PointCloud2 tmp_pcl;//准备雷达点云格式转换

m_octree.lock();//八叉树容器锁上,不再往里加点云

int pcl_count_temp = 0;//点云索引值

for (unsigned int i = 0; i < cur_kf->point_3d_depth.size(); i++)//遍历当前帧所有特征点的3d坐标

{

cv::Point3f pcl = cur_kf->point_3d_depth[i];//临时存放某点坐标

Vector3d pts_i(pcl.x , pcl.y, pcl.z);//相机坐标系下的某点坐标

Vector3d w_pts_i = R * (qi_d * pts_i + ti_d) + P;//世界坐标系下的某点坐标

//为searchPoint点云填充点数据,由于上面的遍历,属于是循环填充数据了

pcl::PointXYZ searchPoint;//把某点的世界坐标系3d坐标给到pcl格式的searchPoint容器里,后续好转化为ROS格式

searchPoint.x = w_pts_i(0);

searchPoint.y = w_pts_i(1);

searchPoint.z = w_pts_i(2);

if (octree->getVoxelDensityAtPoint(searchPoint) < 5)//getVoxelDensityAtPoint(searchPoint):返回searchPoint点所在的叶节点的密度,即点云的个数。???这里是为了筛选合格的体素格,格内点云数小于5才能通过,否则还得继续分格子。

{

cur_kf->point_3d_depth[pcl_count_temp] = pcl;//合格格子内的点云坐标放回point_3d_depth

octree->addPointToCloud(searchPoint, cloud);//添加点searchPoint到点云cloud中

++pcl_count_temp;

}

}

cur_kf->point_3d_depth.resize(pcl_count_temp);//point_3d_depth重新统计特征点数目

pcl::toROSMsg(*(octree->getInputCloud()), tmp_pcl);//将sensor_msgs::PointCloud2转pcl::PointCloud,才能被ROS调用。直接调用pcl自带的函数

m_octree.unlock();//八叉树容器解锁,可以往里加点云

在ROS中订阅点云话题的时候,需要先将数据类型转换成PCL格式之后再做操作。这里直接调用pcl自带的函数pcl::fromROSMsg(),转成pcl点云,再进行降采样。???可以试试改成void *memcpy(void *dest, const void *src, size_t n);直接从地址提取部分点云,可以有效节省时间

pcl::toROSMsg(*(octree->getInputCloud()), tmp_pcl);//将sensor_msgs::PointCloud2转pcl::PointCloud,才能被ROS调用。直接调用pcl自带的函数

函数的最后,对齐pcl和pose的时间戳、关键帧id、原始数据类型,以及发布pub_octree八叉树话题

keyframelist.push_back(cur_kf);

publish();

m_keyframelist.unlock();

tmp_pcl.header = pose_stamped.header;

keyframelist.push_back(cur_kf);

publish();

pub_octree.publish(tmp_pcl);

m_keyframelist.unlock();

updatePath()中,

该函数的目的是,更新轨迹并发布。

加入TicToc t_update,因为存储更新 pcl 的信息而不锁定关键帧列表keyframe list,这可能需要很多时间 ;

加入tmp_keyframelist(临时装point_3d_depth)、tmp_RTlist(临时装配对的位姿P、R)、tmp_header(对齐?和pose的时间戳、关键帧id、原始数据类型);

...

(*it)->getPose(P, R);

...

pose_stamped.pose.orientation.w = Q.w();

...

path[(*it)->sequence].header = pose_stamped.header;

TicToc t_update;

vector<vector<cv::Point3f>> tmp_keyframelist;

queue<pair<Matrix3d, Vector3d>> tmp_RTlist;

std_msgs::Header tmp_header;

...

(*it)->getPose(P, R);

tmp_RTlist.push(make_pair(R, P));

...

pose_stamped.pose.orientation.w = Q.w();

tmp_keyframelist.push_back((*it)->point_3d_depth);

...

path[(*it)->sequence].header = pose_stamped.header;

tmp_header = pose_stamped.header;

加入octree八叉树和pcl部分,

参考PCL常用代码总结(二):kdtree & octree | 码农家园

以下是,将花费算力多的扔出m_keyframelist。

publish();

m_keyframelist.unlock();

publish();

m_keyframelist.unlock();

m_octree.lock();

octree->deleteTree();

cloud->clear();

//添加cloud到八叉树,建立八叉树

octree->setInputCloud(cloud);//传入需要建立kdtree的点云指针

octree->addPointsFromInputCloud(); //构建Octree

//定义八叉树的包围盒

octree->defineBoundingBox(-100, -100, -100, 100, 100, 100);

int update_count = 0;//更新次数

for (auto &pcl_vect : tmp_keyframelist)//遍历tmp_keyframelist,取向量为pcl_vect

{

Vector3d P;

Matrix3d R;

//每个元素的P、R放入tmp_RTlist最前端,往前顶

R = tmp_RTlist.front().first;

P = tmp_RTlist.front().second;

for (auto &pcl : pcl_vect)//遍历pcl_vect,取向量为pcl。下面的就和addKeyFrame()一样,填充数据

{

Vector3d pts_i(pcl.x , pcl.y, pcl.z);

Vector3d w_pts_i = R * (qi_d * pts_i + ti_d) + P;

pcl::PointXYZ searchPoint;

searchPoint.x = w_pts_i(0);

searchPoint.y = w_pts_i(1);

searchPoint.z = w_pts_i(2);

++update_count;

octree->addPointToCloud(searchPoint, cloud);

}

tmp_RTlist.pop();

}

pclFilter(true);//滤波器模式

m_octree.unlock();

ROS_INFO("Update done! Time cost: %f total points: %d", t_update.toc(), update_count);

void PoseGraph::pclFilter(bool flag)是新增加的,在updatePath()最后部分用到

该函数的目的是,???对点云降采样,太稠密了计算机算不过来。

void PoseGraph::pclFilter(bool flag)

{

pcl::PointCloud<pcl::PointXYZ> cloud_copy(*(octree->getInputCloud()));//octree的点云数据装入cloud_copy

pcl::octree::OctreePointCloudDensity<pcl::PointXYZ>* temp_octree = new pcl::octree::OctreePointCloudDensity<pcl::PointXYZ>(RESOLUTION);//实例化一个新的八叉树

pcl::PointCloud<pcl::PointXYZ>::Ptr temp_cloud = pcl::PointCloud<pcl::PointXYZ>::Ptr(new pcl::PointCloud<pcl::PointXYZ>());//设定pcl指针temp_cloud指向点云的坐标容器,即将点云转换为指针格式

cout<<"Size of Cloud before filter:"<<cloud_copy.size()<<endl;

pcl::PointCloud<pcl::PointXYZ>::iterator pcl_iter= cloud_copy.begin();//取排头的点云为pcl_iter

while(pcl_iter != cloud_copy.end())//循环处理cloud_copy中每个点云

{

// 体素格内的点云数大于3,该点云才返回到temp_cloud里

if (octree->getVoxelDensityAtPoint(*pcl_iter) >= 3)

{

temp_cloud->push_back(*pcl_iter);

}

++pcl_iter;

}

temp_octree->defineBoundingBox(-100, -100, -100, 100, 100, 100);

temp_octree->setInputCloud(temp_cloud);//传入需要建立kdtree的点云指针

temp_octree->addPointsFromInputCloud();//构建temp_octree

cout<<"Size of Cloud after filter:"<<temp_cloud->size()<<endl;

if (flag)

{

//lock octree and cloud

delete octree;

(*cloud).clear();

octree = temp_octree;

cloud = temp_cloud;

}

else//不会用到

{

sensor_msgs::PointCloud2 tmp_pcl;

pcl::toROSMsg(*temp_cloud, tmp_pcl);

tmp_pcl.header.stamp = ros::Time::now();

tmp_pcl.header.frame_id = "world";

pub_octree.publish(tmp_pcl);

}

}

savePoseGraph()中,

增加了轨迹文件file_path_shan_pg、file_path_shan_vio。???显示自己跑的数据集的轨迹

具体看代码

结束

至此,rgbd添加结束。后续会试试算法裁剪和加线特征

本文内容由网友自发贡献,版权归原作者所有,本站不承担相应法律责任。如您发现有涉嫌抄袭侵权的内容,请联系:hwhale#tublm.com(使用前将#替换为@)